- Home

- AI & Machine Learning

- Avoiding Proxy Discrimination in LLM-Powered Decision Systems

Avoiding Proxy Discrimination in LLM-Powered Decision Systems

When an AI system denies someone a loan, rejects a job application, or flags their content for removal, it’s easy to assume the decision is based on clear, objective rules. But what if the system isn’t looking at race, gender, or age-and still discriminates? That’s the hidden danger of proxy discrimination in large language model (LLM) systems. It doesn’t use protected traits directly. Instead, it finds sneaky substitutes-features that act like stand-ins for them-and makes decisions that hurt certain groups, even when no one meant for it to happen.

What Exactly Is a Proxy in AI?

A proxy is anything that correlates with a protected characteristic, even if it’s not that characteristic itself. Think of it like this: if you’re trying to guess someone’s race based on their zip code, you’re using a proxy. In the U.S., historical redlining and housing segregation mean certain zip codes still strongly correlate with racial demographics. An LLM trained on loan application data might learn that applicants from ZIP code 19143 are 60% less likely to repay loans. It doesn’t know those people are Black. But it doesn’t need to. The pattern is enough.LLMs are especially dangerous here because they digest everything-social media posts, job histories, credit reports, even the wording of cover letters. They don’t just look at numbers. They read tone, word choice, education history, and employment gaps. And they find patterns humans would never notice. A 2024 study from Stanford showed that LLMs used in hiring could predict gender with 87% accuracy just from the names of universities listed on resumes, even when gender wasn’t provided as input. Why? Because some schools still have gender-skewed enrollment rates decades after coeducation.

Why Proxy Discrimination Is Hard to Catch

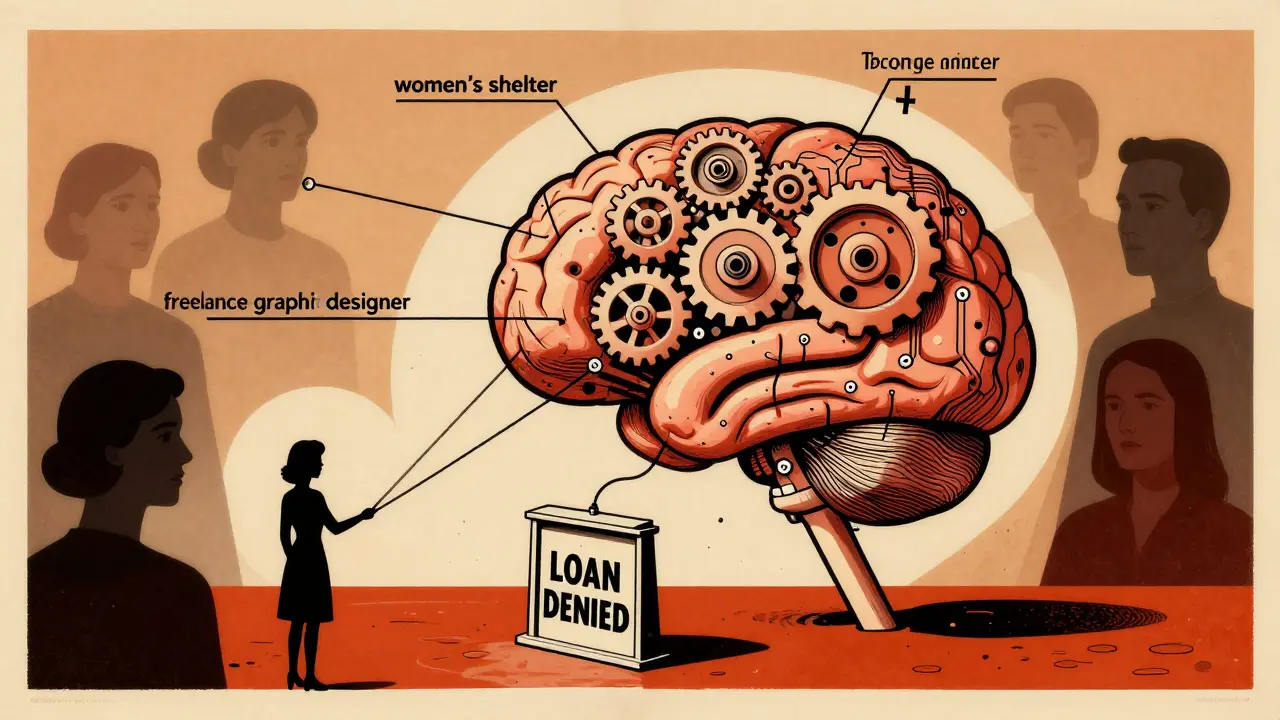

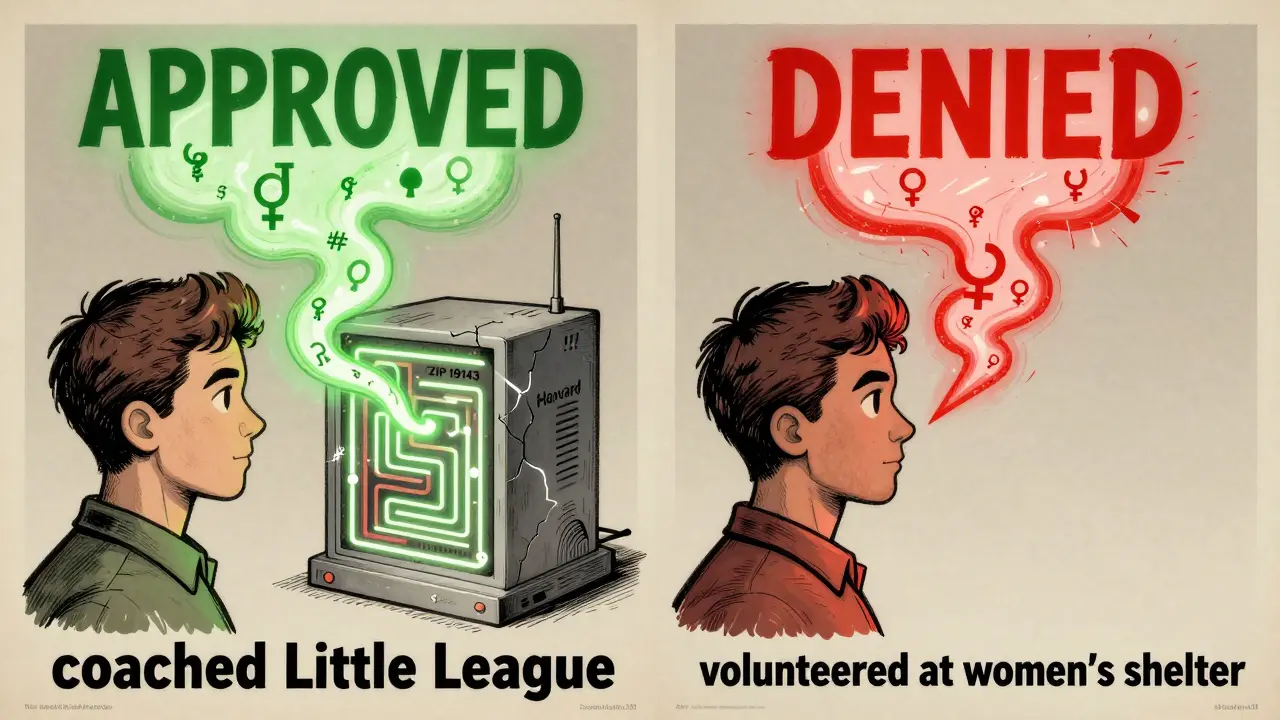

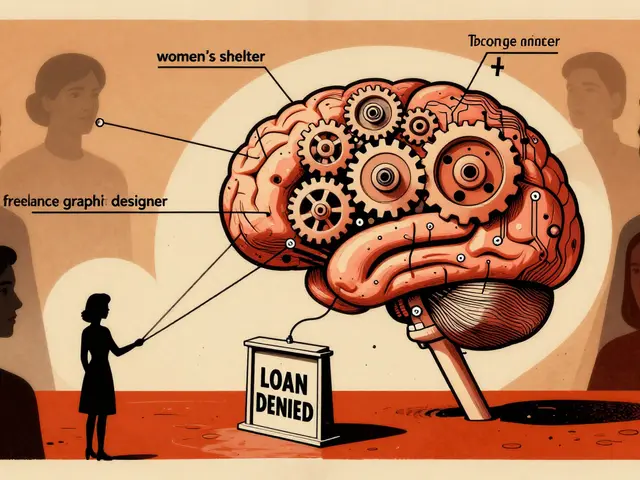

Most fairness tools today look at group-level outcomes: "Are women approved for loans at the same rate as men?" That’s useful-but it misses the real problem. Proxy discrimination doesn’t always show up in group averages. It hides in the edges.Imagine two applicants: Maria and John. Both have identical income, credit scores, and employment history. But Maria’s application mentions she volunteered at a women’s shelter. John’s says he coached his son’s Little League team. The LLM doesn’t see gender. But it sees "women’s shelter" and connects it to other data: women who mention volunteering there are 40% more likely to be single parents, and single parents in this region have a 22% higher default rate on auto loans. So it downgrades Maria’s score. John gets approved. No explicit bias. No protected attribute used. But the outcome is still discriminatory.

This is why traditional audits fail. If you only check overall approval rates by gender, you’ll see no disparity. But if you look at individual decisions, you’ll find Maria’s case was flagged because of a single phrase. That’s proxy discrimination-and it’s invisible to most compliance tools.

How LLMs Find Proxies You Didn’t Know Existed

LLMs don’t just use obvious data. They build latent features-hidden patterns in the data that humans can’t even describe. A model might learn that people who use certain punctuation (like multiple exclamation points) are more likely to be young women, and that young women in your region are less likely to repay personal loans. So it penalizes exclamation points. No one told it to. No one even noticed. But the model figured it out.Here’s another real example from a 2025 audit of a U.S.-based credit scoring LLM: applicants who listed "freelance graphic designer" as their occupation were 35% less likely to be approved than those who listed "marketing manager," even with identical income. Why? Because the model correlated freelance work with instability. But deeper analysis showed that 78% of freelance graphic designers in the training data were women, and women were historically underrepresented in formal financial records. The model didn’t target gender. It targeted a job title that, in practice, acted as a gender proxy.

These proxies are often intersectional, too. A Black woman from a low-income neighborhood might be penalized not because of race, gender, or income alone-but because the model has learned that the combination of all three predicts risk. And since these patterns are buried in thousands of data points, no human could reverse-engineer them without tools.

How to Detect Proxy Discrimination in LLM Systems

There’s no single fix. But there are proven methods that work together.- Use abductive explanations-not just accuracy scores. Instead of asking, "Did the model make the right call?" ask, "What specific features caused this decision?" Formal methods can trace each output back to the features that were necessary and sufficient to produce it. If every explanation for a denied loan includes a feature that correlates with a protected attribute (like university name, job title, or even punctuation), then it’s proxy discrimination-even if the attribute itself wasn’t used.

- Test at the individual level. Aggregate fairness metrics (like equal opportunity difference) are too blunt. You need to simulate what happens if you change one protected attribute-say, swap Maria’s gender in the system-and see if the decision flips. If it does, the system is biased. This is called counterfactual fairness, and it’s the most reliable way to catch hidden proxies.

- Integrate domain knowledge. Don’t let the model guess on its own. Provide it with known correlations: "ZIP code X correlates with race in this region," or "Job title Y is 80% held by women in this state." The system can then adjust for these relationships instead of reinforcing them.

- Monitor continuously. Proxies don’t stay the same. As societal patterns shift, so do the features the model picks up. A 2023 audit found that after TikTok became popular among Gen Z, LLMs started using mentions of TikTok usernames as proxies for age and socioeconomic status. That wasn’t in the training data-it emerged after deployment.

Why Legal Frameworks Are Falling Behind

Current anti-discrimination laws were built for human decision-makers. They require proof of intent. But LLMs don’t have intent. They have patterns. And courts don’t yet have a clear way to define when a correlation becomes discrimination.Take the case of a healthcare LLM that denied preventive care to patients with certain dietary keywords in their medical notes (like "vegan" or "organic groceries"). The system didn’t know the patients were young women. But 82% of those patients were women aged 18-30, and the model had learned that these patients were less likely to follow up on referrals. So it deprioritized them. No one at the company meant to discriminate. No one even knew the model was making that connection. Under current law, there’s no legal violation-because there’s no evidence of discriminatory intent.

This creates a dangerous loophole. Companies can deploy LLMs that systematically disadvantage protected groups-and walk away claiming they didn’t know. The burden of proof is on the victim to show harm, but they can’t see how the system works. That’s not justice. It’s technical immunity.

What Organizations Can Do Right Now

You don’t need a PhD in machine learning to start fixing this. Here’s what works:- Stop trusting black boxes. If your LLM can’t explain why it denied someone, don’t use it for high-stakes decisions.

- Require explainability by design. Choose tools that generate human-readable rationales-not just confidence scores. If the system says, "Denied due to inconsistent employment history," ask: "What specific data points led to that conclusion?"

- Build diversity into your training data. If your data only includes applicants from one region or demographic, the model will learn to favor that group. Actively include underrepresented voices in your training sets.

- Test for proxy effects before launch. Run counterfactual simulations: change gender, race, age, and location in synthetic profiles. See if outcomes shift. If they do, you have a proxy problem.

- Partner with ethicists, not just engineers. Bias isn’t a technical glitch. It’s a social one. Include sociologists, legal experts, and community advocates in your review process.

The Bottom Line

Proxy discrimination isn’t about bad actors. It’s about systems that learn too well. LLMs are designed to find patterns-even the ones we don’t want them to see. And because those patterns are hidden, they’re easy to ignore until someone gets hurt.The solution isn’t to stop using AI. It’s to build systems that don’t just predict outcomes-but question whether those outcomes are fair. If you can’t explain why a decision was made, you shouldn’t be making it. And if your model is using zip codes, job titles, or punctuation as stand-ins for race or gender, you’re not building fair AI. You’re just automating bias.

Can proxy discrimination happen even if protected attributes are removed from the data?

Yes. Removing race, gender, or age from the input data doesn’t stop proxy discrimination. LLMs can infer these traits from other data points like zip code, job title, university name, or even writing style. For example, a model might link "Harvard" with higher income and loan approval rates-but if Harvard’s student body has historically been more male and white, the model learns to favor applicants from that school as a proxy for gender and race, even without those attributes being directly fed in.

How is proxy discrimination different from statistical bias?

Statistical bias looks at group-level disparities: "Do women get approved less often?" Proxy discrimination looks at individual decisions and asks: "Was this outcome influenced by a hidden feature that correlates with a protected trait?" Two systems can have the same overall approval rates but differ wildly in how they treat individuals. One might be fair on average but systematically disadvantage certain people through proxies. That’s why group metrics alone are misleading.

Can LLMs be trained to avoid proxy discrimination?

Yes, but not by accident. You need to actively design for fairness. This includes using counterfactual testing, integrating known social correlations into the model’s reasoning, and requiring interpretable decision paths. Simply retraining on "fairer" data won’t work if the underlying patterns still exist. The model must be forced to question its own assumptions, not just repeat them.

What’s the biggest mistake companies make when trying to fix AI bias?

They focus only on removing obvious protected attributes and assume that’s enough. The real problem isn’t what’s in the data-it’s what the model infers from what’s left. Companies often skip deep audits, ignore individual-level testing, and rely on vendor claims of "bias-free" models. That’s like assuming a car is safe because it doesn’t have a red flag. You still need to check the brakes.

Are there tools available to detect proxy discrimination?

Yes, but they’re still emerging. Tools like Aequitas, Fairlearn, and IBM’s AI Fairness 360 offer some capabilities, but most are designed for statistical fairness, not proxy detection. The most promising approach uses abductive reasoning frameworks that trace decision paths backward to identify necessary features. These require technical expertise but are being adopted by forward-thinking financial institutions and public sector agencies in the U.S. and EU.

Susannah Greenwood

I'm a technical writer and AI content strategist based in Asheville, where I translate complex machine learning research into clear, useful stories for product teams and curious readers. I also consult on responsible AI guidelines and produce a weekly newsletter on practical AI workflows.

Popular Articles

5 Comments

Write a comment Cancel reply

About

EHGA is the Education Hub for Generative AI, offering clear guides, tutorials, and curated resources for learners and professionals. Explore ethical frameworks, governance insights, and best practices for responsible AI development and deployment. Stay updated with research summaries, tool reviews, and project-based learning paths. Build practical skills in prompt engineering, model evaluation, and MLOps for generative AI.

Proxy discrimination is one of those invisible harms that only becomes visible when someone gets crushed by it. The example with the women’s shelter volunteer is chilling-not because it’s malicious, but because it’s so logically consistent. The system didn’t need to know Maria was a woman; it just needed to know what patterns her words triggered. This isn’t a bug. It’s a feature of how LLMs optimize for correlation, not ethics.

We need to stop treating AI fairness as a technical problem. It’s a moral one. If we can’t explain why a decision was made, we shouldn’t make it. Period.

And yes, removing protected attributes is like locking the front door while leaving the window wide open. The model doesn’t care about our intentions. It only cares about what it can infer.

This is real. I saw a friend get denied a small business loan because her resume said she worked at a nonprofit. The system flagged it as ‘unstable income.’ She was a teacher. No one told them nonprofits aren’t unstable.

Okay, so let me get this straight-AI is basically reading between the lines of our lives like it’s some kind of psychic detective, and then making life-or-death calls based on… punctuation? University names? Volunteering at a women’s shelter?

Bro. We built a machine that’s more invasive than your ex’s therapist. And we’re surprised it’s biased?

I’m not mad. I’m just… deeply disappointed. Like, we could’ve built a system that helps people. Instead, we gave it a PhD in human prejudice and called it ‘innovation.’

Oh wow, another ‘AI is racist’ thinkpiece. Let me guess-you also think cats are secretly plotting world domination? This whole proxy discrimination thing is just statisticians crying because their regression models got too good.

Here’s the truth: if you’re getting denied a loan because you used too many exclamation points or went to a ‘gender-skewed’ university, maybe you’re just not a good candidate. The model isn’t racist-it’s *realistic*. The data doesn’t lie. You do.

And don’t even get me started on ‘counterfactual fairness.’ That’s just virtue signaling wrapped in math. If you want fairness, stop applying for loans in the first place. Or better yet-get a job that doesn’t require AI approval.

Think of proxy discrimination as the ghost in the machine’s soul. It’s not the data that’s evil-it’s the silence between the numbers. The unspoken histories. The redlined streets, the gendered universities, the forgotten labor of women who cleaned homes while men built empires.

The LLM didn’t learn bias. It remembered it. It inherited it. Like a child raised in a house where love was conditional, it learned to read the air-not the words.

We don’t need more audits. We need a reckoning. Not with code-but with the world that coded us.

And if you think fairness is a technical problem? You’re still sleeping. The revolution won’t be algorithmic. It will be existential.