How Data Analysts Automate Reporting Dashboards with Vibe Coding Tools

Discover how data analysts are using vibe coding tools like Glide and Bubble to automate reporting dashboards. Learn the benefits, compare the top platforms, and follow a step-by-step implementation guide.

Context Windows in LLMs: Limits, Trade-Offs, and Best Practices for 2026

Explore the limits, trade-offs, and best practices for managing context windows in Large Language Models (LLMs) in 2026. Learn how to optimize token usage, reduce costs, and improve accuracy with RAG and chunking strategies.

Multi-Agent Systems with LLMs: Collaboration and Role Specialization Guide

Explore how Multi-Agent Systems with LLMs transform AI by enabling specialized roles and collaboration. Compare frameworks like Chain-of-Agents, MacNet, and LatentMAS for efficient, scalable solutions.

Human-in-the-Loop Review for Generative AI: Catching Errors Before Users See Them

Discover how Human-in-the-Loop (HITL) review systems catch generative AI hallucinations before they reach users. Learn the costs, benefits, and best practices for implementing HITL in high-stakes industries like healthcare and finance.

HR Automation with Generative AI: Job Descriptions, Interview Guides, and Onboarding

Discover how generative AI automates job descriptions, interview guides, and onboarding. Learn to cut admin time by 80%, reduce bias, and boost candidate satisfaction with proven strategies and tool comparisons.

Documentation Standards for Prompts, Templates, and LLM Playbooks: A Governance Guide

Learn how to implement documentation standards for prompts, templates, and LLM playbooks. Compare frameworks like CAP and Devin AI, explore tools like Waybook, and ensure AI governance compliance.

How to Capture Project Style Guides in System Prompts for Consistency

Learn how to embed project style guides into system prompts for consistent AI output. Discover best practices for structure, length, and testing to improve brand voice and formatting accuracy.

Safety and Harms Evaluation for Large Language Models in Production: A Practical Guide

A practical guide to evaluating LLM safety in production, covering key frameworks like HELM and CASE-Bench, regulatory compliance with the EU AI Act, and strategies to mitigate real-world harms.

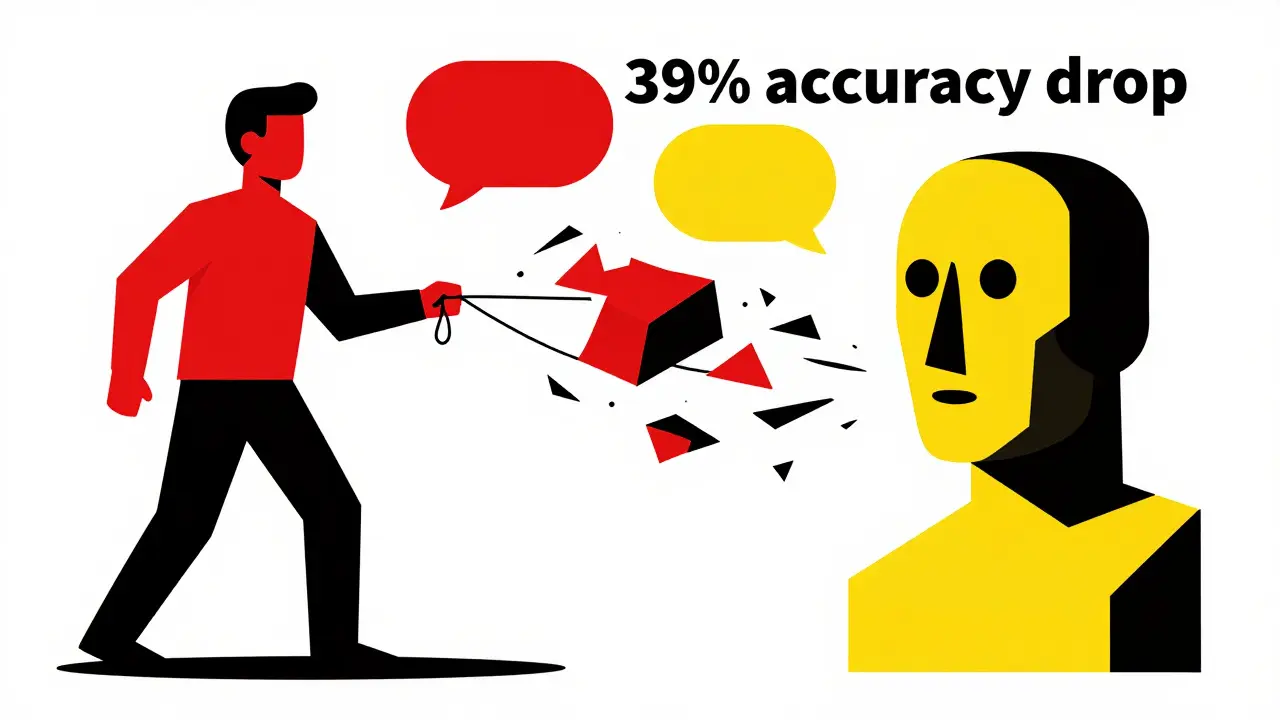

Multi-Turn Conversations with LLMs: How to Manage Conversation State Without Getting Lost

LLMs lose 39% accuracy in long chats. Learn how to manage conversation state using loss masking, Review-Instruct, and structured data to keep AI bots coherent and reliable.

Verification for Generative AI Agents: Guarantees, Constraints, and Audits

Explore how formal verification, constraints, and blockchain audits are transforming generative AI from risky experiments into trusted, compliant enterprise tools.

Natural Language to Schema: Prompting Databases and ER Diagrams

Learn how to use Natural Language to Schema (NL2Schema) to prompt databases and generate ER diagrams. We cover best practices, accuracy benchmarks, and implementation tips for 2026.

E-commerce Visuals with Multimodal Generative AI: Lifestyle Shots and Variants

Discover how multimodal generative AI transforms basic product photos into high-converting lifestyle shots. Learn about costs, limitations, and best practices for e-commerce brands.