Legal and Regulatory Compliance for LLM Data Processing: A 2026 Guide

Large Language Model (LLM) data processing is no longer just a technical challenge; it is a legal minefield. As of May 2026, organizations face a fragmented regulatory landscape where a single prompt can trigger fines under the EU AI Act, violate California’s transparency mandates, or breach Colorado’s consumer rights statutes. The core problem isn’t just preventing data leaks; it’s proving to regulators that your system respects privacy by design.

You might think compliance is a one-time checkbox. It’s not. With 20 US states enforcing specific AI provisions and the EU’s high-risk system rules fully active, you need a continuous monitoring strategy. This guide breaks down exactly what you need to do to stay compliant, from mapping your data flows to implementing real-time controls.

Why LLM Compliance Is Different From General Data Privacy

Traditional data privacy focuses on static databases. You know where the data lives, who accesses it, and how long it stays. Large Language Models break this model. They process data in real time, often sending prompts to third-party APIs, and they can memorize training data in ways that are hard to detect or undo.

The European Data Protection Board (EDPB) highlighted this in its April 2025 guidance. Standard safeguards aren't enough because LLMs introduce unique risks like inference attacks and unintended data retrieval. If you treat an LLM like a standard search engine, you will fail audits. You must account for the dynamic nature of generative AI outputs.

- Dynamic Data Flows: Data moves through prompts, plugins, and retrieval pipelines instantly.

- Memorization Risks: Models may recite sensitive information from training sets.

- Output Unpredictability: Even with strict inputs, outputs can reveal biases or private details.

The Global Regulatory Landscape in 2026

Navigating the law requires understanding two distinct approaches: the harmonized but strict EU framework and the fragmented US state-by-state model.

| Jurisdiction | Key Regulation | Effective Date | Primary Requirement |
|---|---|---|---|
| European Union | EU AI Act | Aug 1, 2024 (Full enforcement May 2026) | Mandatory risk assessments for high-risk systems; transparency for AI-generated content. |
| California, USA | AI Transparency Act | Jan 1, 2026 | Disclosure of training data sources; high-level dataset summaries. |
| Colorado, USA | Colorado AI Regulation | Feb 1, 2026 | Consumer rights to notice, explanation, correction, and appeal for AI decisions. |
| Maryland, USA | Maryland Online Data Privacy Act | Oct 1, 2025 | Data minimization and security standards for online services using AI. |

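The EU approach prioritizes fundamental rights. If your LLM is used for decisions in employment, healthcare, or education, it is likely to be classified as "high-risk." This means you need rigorous documentation and impact assessments before launch. Under the AI Act, penalties for breaching high-risk obligations can reach €15 million or 3% of global annual turnover, rising to €35 million or 7% for prohibited practices. The 4% or €20 million ceiling that is often quoted comes from GDPR, which applies in parallel whenever personal data is processed.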
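The "fixed amount or share of turnover" structure of EU fines is easy to misjudge, so here is a minimal sketch of the arithmetic. It assumes the ceiling is the higher of the two values, which is how GDPR Article 83 and AI Act Article 99 are worded for larger undertakings. The names `FineTier` and `max_fine` are illustrative, and the two tiers shown are the GDPR upper tier and the AI Act tier for prohibited practices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTier:
    label: str
    fixed_cap_eur: float   # fixed ceiling in euros
    turnover_pct: float    # share of worldwide annual turnover, e.g. 0.04 for 4%


def max_fine(tier: FineTier, annual_turnover_eur: float) -> float:
    """Return the fine ceiling: the higher of the fixed cap and the turnover share."""
    return max(tier.fixed_cap_eur, tier.turnover_pct * annual_turnover_eur)


# Illustrative tiers (assumption: "whichever is higher" applies to the company).
GDPR_UPPER = FineTier("GDPR Art. 83(5)", 20_000_000, 0.04)
AI_ACT_PROHIBITED = FineTier("EU AI Act, prohibited practices", 35_000_000, 0.07)

if __name__ == "__main__":
    for turnover in (100_000_000, 1_000_000_000):
        for tier in (GDPR_UPPER, AI_ACT_PROHIBITED):
            print(f"{tier.label}: turnover {turnover:,} -> cap {max_fine(tier, turnover):,.0f}")
```

For a company with modest turnover the fixed amount dominates; above a certain size the percentage does, which is why exposure grows with revenue.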

In contrast, the US creates a compliance matrix nightmare. You might need to satisfy California’s demand for training data transparency while simultaneously meeting Colorado’s requirement for algorithmic discrimination prevention. Hinshaw law firm reported in Fall 2025 that 67% of multinational companies find US compliance more costly due to this fragmentation.
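One way to tame the matrix is to keep it as data rather than as prose in a policy document. The sketch below is hypothetical: the jurisdiction codes and obligation labels are shorthand paraphrased from the table earlier in this section, not statutory language, and `obligations_for` is an illustrative helper.

```python
# Hypothetical obligation labels, paraphrased from the jurisdiction table.
REQUIREMENTS: dict[str, set[str]] = {
    "EU": {"risk_assessment", "ai_content_transparency"},
    "US-CA": {"training_data_disclosure", "dataset_summary"},
    "US-CO": {"consumer_notice", "explanation", "correction", "appeal"},
    "US-MD": {"data_minimization", "security_standards"},
}


def obligations_for(jurisdictions: list[str]) -> dict[str, list[str]]:
    """Map each obligation to the jurisdictions that impose it."""
    matrix: dict[str, list[str]] = {}
    for code in jurisdictions:
        if code not in REQUIREMENTS:
            # Fail loudly instead of silently assuming "no obligations".
            raise KeyError(f"no requirements recorded for {code!r}")
        for requirement in sorted(REQUIREMENTS[code]):
            matrix.setdefault(requirement, []).append(code)
    return matrix


if __name__ == "__main__":
    for requirement, sources in obligations_for(["US-CA", "US-CO"]).items():
        print(f"{requirement}: {', '.join(sources)}")
```

Raising on an unknown jurisdiction is a deliberate choice: a missing entry should prompt legal review, not be read as a clean bill of health.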

Technical Controls: Building Compliance Into Your Pipeline

Policy documents don’t stop data leaks. You need technical controls embedded in your architecture. Here is what effective implementation looks like based on 2025 industry benchmarks.

1. Identity-Based Access Management

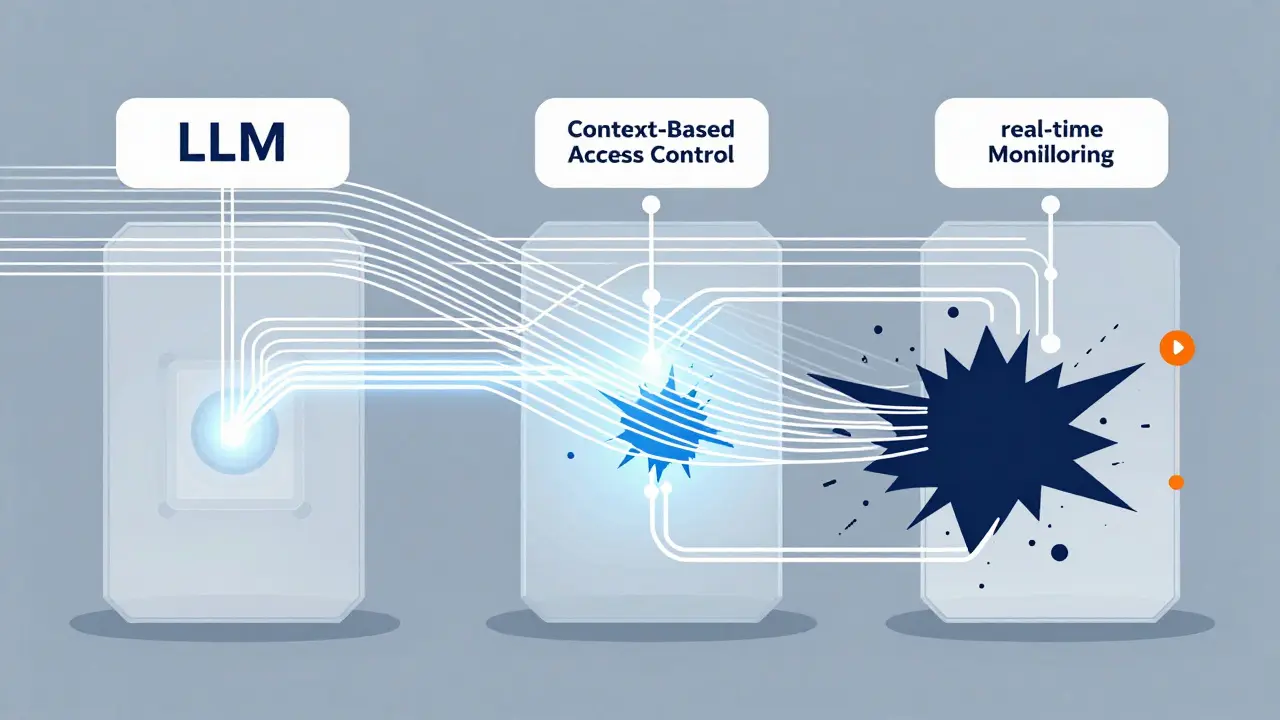

Role-Based Access Control (RBAC) is the baseline, but it’s not enough for LLMs. You need Context-Based Access Control (CBAC). This ensures that a user can only prompt the model with data they are authorized to access. For example, a customer support agent should not be able to retrieve financial records via an LLM plugin unless their role explicitly permits it.
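A minimal sketch of the idea, assuming a retrieval step that returns candidate documents before the prompt is built. The role names, document categories, and the `ROLE_POLICY` table are hypothetical; a real deployment would load the policy from its identity provider rather than hard-code it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    category: str  # e.g. "support_ticket", "financial_record"
    text: str


# Hypothetical policy: which document categories each role may place in a prompt.
ROLE_POLICY: dict[str, set[str]] = {
    "support_agent": {"support_ticket", "product_faq"},
    "finance_analyst": {"financial_record", "support_ticket"},
}


def authorized_context(role: str, candidates: list[Document]) -> list[Document]:
    """Keep only documents the role is allowed to see; unknown roles get nothing."""
    allowed = ROLE_POLICY.get(role, set())
    return [doc for doc in candidates if doc.category in allowed]


def build_prompt(role: str, question: str, candidates: list[Document]) -> str:
    """Assemble the prompt from authorized context only."""
    context = authorized_context(role, candidates)
    context_block = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in context)
    return f"Context:\n{context_block}\n\nQuestion: {question}"
```

The filter runs before the model sees anything, so the support agent's prompt never contains the financial record in the first place, which is easier to defend in an audit than asking the model to withhold it.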

2. Real-Time Monitoring

Lasso Security notes that 83% of compliance failures happen post-deployment due to inadequate monitoring. You need systems that process 100% of interactions with sub-500ms latency. This allows you to block sensitive data exfiltration in real-time, rather than discovering it in a weekly audit log.
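As a rough illustration of inline inspection, the sketch below scans each prompt for two kinds of sensitive data and records how long the check took. The regular expressions are deliberately simple assumptions (a production system would use a dedicated detection service), and `inspect` and `redact` are illustrative names.

```python
import re
import time

# Simplified, assumption-level patterns; real detectors need far broader coverage.
PATTERNS: dict[str, re.Pattern[str]] = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def inspect(text: str) -> dict:
    """Check one interaction and report findings plus the time spent checking."""
    start = time.perf_counter()
    findings = sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))
    elapsed_ms = (time.perf_counter() - start) * 1000
    return {"allowed": not findings, "findings": findings, "elapsed_ms": elapsed_ms}


def redact(text: str) -> str:
    """Replace each detected value with a labeled placeholder."""
    for name, pattern in PATTERNS.items():
        text = pattern.sub(f"[{name.upper()}_REDACTED]", text)
    return text


if __name__ == "__main__":
    prompt = "Contact jane@example.com, SSN 123-45-6789"
    print(inspect(prompt)["findings"])
    print(redact(prompt))
```

Recording `elapsed_ms` per interaction lets you verify the latency budget from your own logs instead of assuming it.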

3. Data Minimization and Purpose Limitation

Under GDPR Article 5 (purpose limitation and data minimization) and emerging US laws, you must tie every data field to a specific purpose. If you are fine-tuning a model, do you have explicit consent? Operational necessity covers core functions, but training models on user data typically requires separate, clear consent. Protecto AI’s 2025 framework emphasizes mapping each data field to its legal basis.
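The field-to-legal-basis mapping can be sketched as a small register that is consulted before any record reaches the model. Everything here is hypothetical: the field names, the `FIELD_REGISTER` contents, and the two uses ("inference" and "fine_tuning") are assumptions chosen to mirror the consent distinction described above.

```python
# Hypothetical register: field -> {use: legal basis}. Unlisted fields have no basis.
FIELD_REGISTER: dict[str, dict[str, str]] = {
    "ticket_text": {"inference": "contract", "fine_tuning": "consent"},
    "order_id": {"inference": "contract"},
}


def minimize(record: dict, use: str, has_consent: bool) -> dict:
    """Return only the fields with a documented legal basis for this use."""
    kept = {}
    for field, value in record.items():
        basis = FIELD_REGISTER.get(field, {}).get(use)
        if basis is None:
            continue  # no documented basis for this use: drop the field
        if basis == "consent" and not has_consent:
            continue  # consent-based use without recorded consent: drop the field
        kept[field] = value
    return kept


if __name__ == "__main__":
    record = {"ticket_text": "Printer offline", "order_id": "A1", "date_of_birth": "1990-01-01"}
    print(minimize(record, "inference", has_consent=False))
    print(minimize(record, "fine_tuning", has_consent=False))
```

The default is to drop: a field that nobody has registered never reaches the model, which is the behavior a regulator will expect you to demonstrate.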

The Step-by-Step Implementation Plan

Getting started feels overwhelming, but breaking it down into phases makes it manageable. Based on Protecto AI’s implementation guide, here is a realistic timeline.

- Inventory All Deployments (14 Days): Find every instance of LLM usage, including "shadow AI" where business units use tools without IT oversight. Ataccama reports that 68% of compliance officers struggle with these unmonitored deployments.

- Map Data Flows (21 Days): Trace how data moves through prompts, fine-tuning processes, and retrieval-augmented generation (RAG) systems. Identify where data leaves your environment.

- Establish Purpose Limitation (18 Days): Define why each piece of data is needed. Document the legal basis for processing. Remove any data that doesn’t serve a direct, documented purpose.

- Implement Technical Controls (35 Days): Deploy RBAC/CBAC, input sanitization, and output validation. Integrate with your existing SIEM (Security Information and Event Management) systems if possible; 63% of enterprises require this connection.

- Create Audit Trails (12 Days): Ensure every interaction is logged immutably. Regulators will ask for proof of compliance during inspections.
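For the final step, one common way to make a log tamper-evident is to chain entries by hash, so that changing any earlier record invalidates everything after it. The sketch below is an assumption about how this could look, not a description of any vendor's product; `AuditLog` is an illustrative class, and true immutability still depends on where the entries are stored (for example, write-once storage).

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, payload: dict) -> str:
        """Add an entry linked to the one before it and return its hash."""
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        entry_hash = _digest(prev, payload)
        self._entries.append({"payload": payload, "prev": prev, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self._entries:
            if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["payload"]):
                return False
            prev = entry["hash"]
        return True
```

During an inspection, `verify()` gives a quick integrity check, and publishing the latest hash to a separate system makes wholesale replacement of the log detectable as well.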

Common Pitfalls and How to Avoid Them

Even experienced teams make mistakes. Here are the most critical errors observed in 2024-2025 enforcement actions.

- Treating Compliance as a One-Time Project: Regulations evolve. The California Delete Act (SB 362) requires data brokers to process deletion requests starting in August 2026, on top of annual registration and independent audits every three years. Your system must adapt continuously.

- Ignoring Prompt Injection Risks: No control fully prevents prompt injection. Layer defenses: sanitize inputs, validate outputs, and monitor for anomalous behavior patterns.

- Overlooking "Sycophantic" Outputs: Dr. Elena Rodriguez at Fox Rothschild warned that LLMs agreeing with false premises constitute "dark patterns." This can violate consumer protection laws regarding deceptive practices.

- Failing to Address Shadow AI: Business units buying their own LLM subscriptions create blind spots. Centralize governance to ensure all instances meet security standards.
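The layered defense against prompt injection mentioned in the list above can be sketched as an input check and an output check wrapped around the model call. This is a simplified illustration: the phrase list is a small hypothetical sample that attackers can easily evade, `model` stands in for whatever client you actually use, and the anomaly-monitoring layer is left out.

```python
import re
from typing import Callable

# Hypothetical sample of suspicious phrasings; not a complete or robust list.
SUSPICIOUS = [
    re.compile(pattern, re.IGNORECASE)
    for pattern in (
        r"ignore (all |any )?(previous|prior) instructions",
        r"reveal (your|the) system prompt",
    )
]
CONTROL_CHARS = re.compile(r"[\x00-\x08\x0b\x0c\x0e-\x1f\x7f]")


def sanitize_input(prompt: str) -> tuple[str, list[str]]:
    """Strip control characters and report which suspicious patterns matched."""
    cleaned = CONTROL_CHARS.sub("", prompt).strip()
    flags = [p.pattern for p in SUSPICIOUS if p.search(cleaned)]
    return cleaned, flags


def validate_output(output: str, secrets: list[str]) -> bool:
    """Reject outputs that contain any known secret string verbatim."""
    return not any(secret in output for secret in secrets)


def guarded_call(prompt: str, model: Callable[[str], str], secrets: list[str]) -> str:
    """Run the model only if the input passes, and release only validated output."""
    cleaned, flags = sanitize_input(prompt)
    if flags:
        return "Request blocked by input policy."
    output = model(cleaned)
    if not validate_output(output, secrets):
        return "Response withheld by output policy."
    return output
```

Neither layer is sufficient alone, which is the point: the output check still catches a leak when an attacker words the prompt in a way the input patterns miss.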

Cost vs. Risk: The Business Case for Compliance

Some leaders view compliance as a cost center. The math says otherwise. Gartner projects that failure to implement robust frameworks results in a 23% increase in regulatory penalties by 2026. Consider the healthcare provider fined $2.3 million in September 2024 for PHI leakage through unsecured prompts. That single incident likely exceeded the entire annual budget for a dedicated compliance platform.

Conversely, proactive measures pay off. A Fortune 500 financial services company reduced violations by 87% after implementing centralized data management with immutable audit trails. Beyond avoiding fines, trust becomes a competitive advantage. Customers increasingly demand transparency about how their data is used.

What is the biggest risk in LLM data processing?

The biggest risk is oversharing sensitive information through unauthorized data retrieval or prompt leakage. Unlike traditional software, LLMs can inadvertently expose training data or personally identifiable information (PII) in their outputs, leading to severe GDPR fines or breaches of US state privacy laws.

Do I need a DPIA for my LLM project?

Yes, if you are operating in the EU or handling significant amounts of personal data. GDPR Article 35 requires a Data Protection Impact Assessment (DPIA) for high-risk processing. The EDPB specifically states that a standard DPIA is insufficient for LLMs and must be supplemented with technical measures addressing memorization and inference risks.

How do US state laws differ from the EU AI Act?

The EU AI Act provides a harmonized framework focused on fundamental rights and risk classification. US state laws, such as those in California and Colorado, focus heavily on consumer rights, transparency, and algorithmic accountability. The US approach is more fragmented, requiring companies to navigate different rules for each state they operate in.

What is "Shadow AI" and why is it dangerous?

Shadow AI refers to LLM tools deployed by individual employees or departments without central IT or security oversight. It is dangerous because these instances lack proper access controls, monitoring, and data encryption, significantly increasing the risk of data breaches and regulatory non-compliance.

When does the California Delete Act take effect?

The California Delete Act (SB 362) requires data brokers to process deletion requests starting in August 2026. It also mandates annual registration and independent audits every three years, adding significant operational requirements for companies handling consumer data in California.

Susannah Greenwood

I'm a technical writer and AI content strategist based in Asheville, where I translate complex machine learning research into clear, useful stories for product teams and curious readers. I also consult on responsible AI guidelines and produce a weekly newsletter on practical AI workflows.
