Security Regression Testing After AI Refactors and Regenerations

When AI tools like GitHub Copilot or Amazon CodeWhisperer rewrite your code, they don’t just change how it looks; they can quietly break security. You might not notice until a hacker exploits a missing input check, a broken session token, or an over-permissive API endpoint. That’s not a bug. That’s a security regression: a vulnerability introduced during AI-driven code changes that traditional testing completely misses.

Traditional regression tests check if features still work after a change. Did the login button still work? Did the payment form still submit? Good. But they don’t ask: Did the AI remove the CSRF token validation? Did it replace a secure function with a deprecated one? Did it accidentally expose an admin route by changing a single line of access control logic? That’s where security regression testing comes in. It’s not optional anymore. In 2024, 68% of companies using AI for code refactoring had at least one security incident tied to it, according to Gartner. The fix isn’t more code. It’s smarter testing.

Why AI Refactors Break Security Without Warning

AI tools don’t understand context the way humans do. They optimize for patterns, not purpose. If you ask an AI to "make this authentication flow faster," it might replace a multi-step verification with a single token check. It doesn’t know that token is tied to a user’s role. It doesn’t know that this endpoint was never meant to be public. It just sees "faster" and "simpler."

That’s why 28% of AI-refactored code samples from Snyk’s 2024 study had improper access control issues. Another 22% had misconfigured security headers or missing encryption. These aren’t copy-paste errors. They’re subtle logic breaks. A human reviewer might catch them. But standard regression tests? They’ll pass because the app still logs users in. The security flaw hides in plain sight.

And it gets worse. AI tools sometimes generate entirely new code paths (API calls, middleware handlers, or validation routines) that never existed before. These paths aren’t covered by your old test suite. They’re invisible. Until they’re exploited.
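One way to make those invisible paths visible is to compare the route inventory before and after the refactor and flag anything new that no security test touches. The sketch below is only an illustration: the route strings, the three sets, and the `uncovered_new_routes` helper are all hypothetical, and in a real project the inventories would come from your framework's router or an API spec.

```python
def uncovered_new_routes(before, after, tested):
    """Return routes added by the refactor that no security test exercises."""
    added = set(after) - set(before)
    return sorted(added - set(tested))


# Hypothetical route inventories, e.g. dumped from the router before and after.
routes_before = {"GET /profile/{id}", "POST /login"}
routes_after = {"GET /profile/{id}", "POST /login", "GET /internal/export"}

# Hypothetical list of routes that already have security regression tests.
routes_with_security_tests = {"GET /profile/{id}", "POST /login"}

missing = uncovered_new_routes(routes_before, routes_after, routes_with_security_tests)
print(missing)  # ['GET /internal/export']
```

Anything in `missing` is a code path the AI introduced that your suite has never checked. Treat a non-empty list as a reason to stop and write tests, not as a warning to skim past.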

What Security Regression Testing Actually Does

Security regression testing doesn’t just check if code runs. It checks if security rules still hold. It asks: Is every user still forced to authenticate before accessing sensitive data? Is every input still sanitized? Are encryption keys still rotated? Are permissions still least-privileged?

Here’s what it looks like in practice:

- Before the AI refactor: A user can only view their own profile. The backend checks the user ID against the JWT token.

- After the AI refactor: The AI "optimizes" the code and removes the token validation check. Now, anyone can view any profile by changing the URL parameter.

- Standard regression test: Passes. Profile loads. No error.

- Security regression test: Fails. Access control logic changed. Alert triggered.

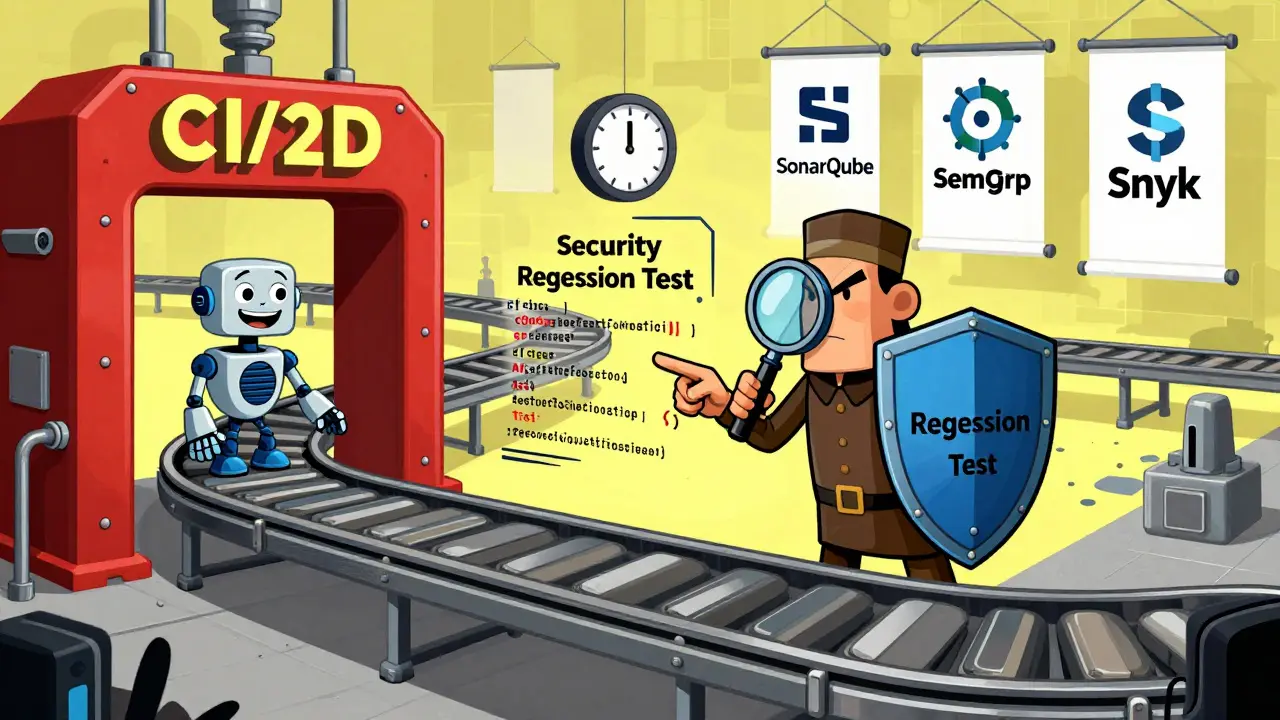

This is the core difference. Security regression testing is behavioral. It doesn’t care if the code looks different. It cares if the security behavior stayed the same. Tools like Semgrep and SonarQube 9.9+ now have AI-specific rules that flag these changes. They compare pre- and post-refactor security properties, not just syntax.
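The profile scenario above can be sketched in a few lines. This is a toy model, not a real web handler: `Request`, `PROFILES`, and both `view_profile_*` functions are made up for illustration, and JWT verification is assumed to have already happened upstream.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    token_user_id: int  # user ID from the already-verified JWT (verification not shown)
    requested_id: int   # user ID taken from the URL parameter


PROFILES = {1: "alice", 2: "bob"}


def view_profile_before(req):
    # Original handler: a caller may only read their own profile.
    if req.token_user_id != req.requested_id:
        return 403, None
    return 200, PROFILES[req.requested_id]


def view_profile_after(req):
    # Hypothetical AI "optimization": the ownership check is gone.
    return 200, PROFILES[req.requested_id]


def functional_test(handler):
    # Standard regression test: a user can load their own profile.
    return handler(Request(1, 1)) == (200, "alice")


def security_test(handler):
    # Security regression test: a user must NOT load someone else's profile.
    status, body = handler(Request(1, 2))
    return status == 403 and body is None


for name, handler in [("before", view_profile_before), ("after", view_profile_after)]:
    print(name, functional_test(handler), security_test(handler))
# before True True
# after True False
```

The functional test passes on both versions. Only the security test notices that the refactor changed who is allowed to see what.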

According to DX’s 2024 study, teams using this method saw a 70% drop in post-deployment security issues. That’s not luck. It’s structure.

How to Build a Security Regression Test Suite

You can’t just run your old tests and call it done. You need to build new ones. Here’s how:

- Catalog security-critical paths. Identify every part of your app that handles authentication, data access, encryption, or external API calls. These are your high-risk zones. For a medium-sized app, this takes 2-3 weeks. Use code maps, dependency graphs, and your threat model. Don’t guess. Document it.

- Create security-specific test cases. Add at least 15-20% more tests to your regression suite. Focus on OWASP Top 10 items that AI commonly breaks: broken access control, security misconfiguration, injection flaws, and insecure design. For example: "Can a non-admin user call /api/admin/delete?" "Does the system reject a 10,000-character password?" "Is the Content-Security-Policy header still present after refactoring?"

- Integrate into CI/CD. Make security regression tests a gate. No merge unless they pass. Tools like Checkmarx, Snyk, and Synopsys Seeker can auto-generate these tests or validate changes in real time. 83% of high-performing DevSecOps teams (per DORA 2024) do this. It’s not optional.

- Automate maintenance. AI tools evolve. Your tests will break. Testim.io’s Smart Maintenance (released Q1 2024) reduces test upkeep by 55% by learning from code changes. Without it, 72% of teams see test obsolescence within six months.
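The three example questions from the test-case step can be written as ordinary unit tests. The sketch below uses Python's standard `unittest` module against a `FakeApp` stand-in; `FakeApp`, the `/api/register` path, and the 128-character limit are assumptions made for the example, so swap in your real test client and your real policy.

```python
import unittest

MAX_PASSWORD_LENGTH = 128  # assumed limit; use whatever your policy requires


class FakeApp:
    """Hypothetical stand-in for the application under test."""

    def request(self, method, path, role="user", body=None):
        headers = {"Content-Security-Policy": "default-src 'self'"}
        if path.startswith("/api/admin/") and role != "admin":
            return 403, headers
        if path == "/api/register":
            password = (body or {}).get("password", "")
            if len(password) > MAX_PASSWORD_LENGTH:
                return 400, headers
            return 201, headers
        return 200, headers


class SecurityRegressionTests(unittest.TestCase):
    def setUp(self):
        self.app = FakeApp()

    def test_non_admin_cannot_call_admin_delete(self):
        status, _ = self.app.request("DELETE", "/api/admin/delete", role="user")
        self.assertEqual(status, 403)

    def test_oversized_password_is_rejected(self):
        body = {"password": "x" * 10_000}
        status, _ = self.app.request("POST", "/api/register", body=body)
        self.assertEqual(status, 400)

    def test_csp_header_still_present(self):
        _, headers = self.app.request("GET", "/")
        self.assertIn("Content-Security-Policy", headers)


def run_gate():
    """Return True only if every security regression test passes."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(SecurityRegressionTests)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()


gate_passed = run_gate()
print("gate passed:", gate_passed)
```

In a pipeline, the job would exit with a non-zero status whenever `gate_passed` is false, which is what turns these tests into a merge gate rather than a report nobody reads.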

It’s not just about tools. It’s about culture. Security regression testing requires developers who understand both AI behavior and security logic. Only 37% of QA teams have that skill, according to TechBeacon. If you don’t have them, train them. Or hire them. The cost of a breach is $147,000 on average, per Synopsys. Training is cheaper.

Tools That Actually Work

Not all security tools handle AI refactors well. Generic SAST tools miss 58% of AI-induced vulnerabilities. Here’s what works:

| Tool | AI-Specific Detection Rate | Integration | Cost (Annual) |
|---|---|---|---|
| SonarQube 9.9+ | 35% better than older versions | CI/CD, IDE | Open-source or $20K+ |
| Semgrep | High (custom rules) | CLI, GitHub Actions | Free or $10K+ |
| Snyk (with AI plugins) | 32% better than generic tools | CI/CD, PR reviews | $15K+ |
| Checkmarx | High (behavioral analysis) | CI/CD, Jira | $25K+ |
| OWASP ZAP (with AI plugins) | 28% better than base | CI/CD, manual | Free |

Open-source tools like OWASP ZAP are powerful but require manual rule tuning. Commercial tools like Snyk and Synopsys Seeker automate more and integrate better with AI code review pipelines. If you’re in finance or healthcare, you need the commercial ones. Regulatory audits demand proof you tested for AI-specific risks.

Real-World Wins and Failures

JPMorgan Chase cut false positives in their AI code review pipeline by 58% after implementing security regression testing. True positives went up 41%. Why? Because they stopped wasting time on harmless changes and started catching real flaws.

Capital One reduced PCI-DSS violations by 92% in Q3 2023. Their secret? They didn’t just test code. They tested behavior. Every change to a payment API had to prove it still enforced token binding and rate limiting. No exceptions.

On the flip side, Hacker News threads from July 2024 show teams where AI removed input validation checks-only to have them discovered during a penetration test weeks later. One team lost customer data because the AI replaced a length-check function with a "more efficient" version that didn’t validate at all. The regression tests passed. The security tests didn’t exist.
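A boundary test is usually enough to catch that kind of swap. The sketch below is a guess at what such a case could look like, not a reconstruction of any team's code: the 280-character limit and both validators are hypothetical.

```python
MAX_COMMENT_LENGTH = 280  # hypothetical limit for the example


def accept_comment_original(text):
    # Original validator: non-empty and within the length limit.
    return 0 < len(text) <= MAX_COMMENT_LENGTH


def accept_comment_refactored(text):
    # Hypothetical "more efficient" rewrite: only checks that text is non-empty.
    return bool(text)


def boundary_cases():
    # (input, expected verdict) pairs around the edges of the rule.
    return [
        ("", False),
        ("a", True),
        ("a" * MAX_COMMENT_LENGTH, True),
        ("a" * (MAX_COMMENT_LENGTH + 1), False),
    ]


def failing_cases(validator):
    """Return the input lengths where the validator disagrees with the rule."""
    return [len(text) for text, expected in boundary_cases() if validator(text) != expected]


print(failing_cases(accept_comment_original))    # []
print(failing_cases(accept_comment_refactored))  # [281]
```

Typical inputs pass both versions, which is why a functional suite stays green. The one-past-the-limit case is the only one that exposes the missing check.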

Atlassian’s 2024 survey found teams using security regression testing had 68% higher confidence in deployment safety. That’s not hype. That’s measurable.

The Future: Where This Is Headed

This isn’t a trend. It’s a requirement. By 2026, 85% of enterprise security budgets will include dedicated funding for AI refactoring validation, up from 29% in 2023, according to IDC.

OWASP just released version 1.2 of its AI Security Testing Guide, adding 12 new test cases. SonarQube now identifies which code paths were affected by AI changes with 92% accuracy. The Linux Foundation’s OpenSSF is building standardized frameworks with Google, Microsoft, and IBM.

And the next leap? AI testing AI. Google’s SECTR project (expected Q2 2025) will auto-generate security regression tests from code changes. GitHub’s Project Shield (in private beta) will verify security equivalence in real time as you refactor. Imagine: you type a prompt, the AI rewrites your code, and before you even commit, it says: "This change breaks role-based access. Here’s why."

Long-term, the best defense is feedback. Anthropic’s December 2024 research showed feeding security regression test results back into AI training models reduced security vulnerabilities in generated code by 63%. The more you test, the smarter the AI gets at security.

What Happens If You Don’t Do This

You’ll get lucky for a while. Then you won’t.

Organizations without security regression testing for AI refactors face 3.8x higher incident rates, according to 451 Research’s predictive model through 2027. That’s not a guess. It’s math. AI refactors are happening. Every day. In every company. If you’re not testing the security impact, you’re gambling.

Regulators won’t care that you used AI. They’ll care that you exposed customer data. Auditors won’t accept "the AI did it" as a defense. They’ll ask: "What process did you have to catch this?" If you don’t have one, you’re not compliant. You’re exposed.

Security regression testing isn’t about slowing down. It’s about shipping safely. It’s about knowing that every AI-generated line of code not only works, but also didn’t break something vital.

What’s the difference between regular regression testing and security regression testing?

Regular regression testing checks if features still work after a code change, like whether a button still submits a form. Security regression testing checks if security rules still hold, like whether a user is still blocked from data they shouldn’t see. AI can make code run faster while quietly removing authentication checks. Regular tests won’t catch that. Security tests will.

Can I use my existing SAST tools for AI-refactored code?

Most can’t. Generic SAST tools miss up to 58% of AI-induced vulnerabilities because they look for known patterns, not behavioral changes. Tools like SonarQube 9.9+, Semgrep, and Snyk with AI plugins have updated rules that detect logic shifts, not just code smells. If your tool doesn’t mention AI-specific detection, it’s not enough.

How much extra time does security regression testing add?

It adds about 18-22% to your total regression test runtime. But that’s far less than the cost of a breach ($147,000 on average). You’re also adding 15-20% more test cases, which takes time upfront. But once built, they run automatically. The real time sink is maintaining them as AI tools evolve. Tools like Testim.io’s Smart Maintenance cut that maintenance effort by 55%.

Which industries need this the most?

Finance and healthcare. Both are heavily regulated (PCI-DSS, HIPAA) and handle high-value data. Capital One cut PCI-DSS violations by 92% after implementing this. Healthcare systems using AI to refactor patient records saw a 65% drop in access control breaches. If your industry has compliance rules, you need this.

Is open-source enough, or do I need paid tools?

Open-source tools like OWASP ZAP and Semgrep can work if you have skilled engineers to tune them. But for enterprise-scale AI refactoring, paid tools like Snyk, Checkmarx, or Synopsys Seeker offer automation, integration with CI/CD, and AI-specific detection that saves time and reduces risk. If you’re deploying AI at scale, the ROI on paid tools is clear.

Can AI help generate security regression tests?

Yes, and it’s getting better. Google’s SECTR project (expected Q2 2025) will auto-generate tests from code changes. GitHub’s Project Shield already tests security equivalence during refactoring in private beta. The future isn’t manual test writing. It’s AI that understands security intent and builds tests as you code.

Susannah Greenwood

I'm a technical writer and AI content strategist based in Asheville, where I translate complex machine learning research into clear, useful stories for product teams and curious readers. I also consult on responsible AI guidelines and produce a weekly newsletter on practical AI workflows.

10 Comments

AI doesn't care about security. It just wants to make code faster. That's the problem. We need to stop treating it like a magic wand and start treating it like a wild animal. One wrong move and boom. Security gone.

Simple. No fluff.

Let me guess. You're one of those people who think AI is the future. Tell me, when did we stop trusting human judgment? When did we hand over the keys to a black box that doesn't even know what 'least privilege' means? This isn't innovation. It's surrender. And now we're paying for it with data breaches, compliance fines, and sleepless nights. The system is rigged. The tools are blind. And the auditors? They're still using 2018 checklists. Wake up.

It's funny, really. We built AI to help us write better code, but we forgot to teach it why security matters. It's like giving a child a sharp knife and saying 'be careful' without explaining what sharp means. Maybe the real issue isn't the AI. It's that we stopped explaining the 'why' to our tools. We optimized for speed, not meaning. And now the code is fast... but hollow.

i just dont trust ai anymore like seriously why do we keep letting it touch our code its like letting a toddler handle a bomb and then asking why it exploded i mean come on we all know the truth the big tech companies are just using this to cut costs and push the blame onto devs like us and now im scared to even touch a git commit because what if the ai just deletes my auth layer again i swear last time it happened i had to redo 3 days of work in one night and my cat was mad at me for not sleeping i miss when code was just code not some black box nightmare

This is what happens when we let Western tech companies run our systems. AI doesn't understand Indian or Asian security culture. It doesn't know what 'trust but verify' means. We built our systems on discipline. They build on convenience. Now we're paying the price. Why not use local tools? Why not train AI on Indian compliance standards? Because they'd rather sell us a $25K tool than admit their code is built on sand. This isn't tech. It's colonialism with a GitHub logo.

i read this whole thing and honestly i think most of it is just fluff. yes ai can mess up security but so can humans. like when i was a junior dev i once removed a validation check because i thought it was redundant. it took a month to find. so why is ai the villain here? also the stats are all from 2024 which is basically yesterday. who even did these studies? and why do we need 15-20% more tests? that just sounds like a way to make consultants rich. also i dont believe in tools that cost 20k a year. my company uses free tools and we're fine. maybe the real problem is overcomplicating things.

I really appreciate how clearly this was laid out. It’s easy to feel overwhelmed when AI starts changing how we work, but this gives us a real path forward. Security regression testing isn’t about slowing down. It’s about building something that lasts. I’ve seen teams skip this because they’re in a hurry, and the cost always comes back. Harder. Bigger. Slower. This is the quiet hero work that keeps systems alive. Keep doing it. We need more of this.

They don't want you to know this but AI is being trained on leaked code from banks and government systems. Every time you use Copilot, you're feeding data into a black box that might be owned by someone who doesn't want you to be secure. The 68% statistic? It's not a bug. It's a feature. They want you vulnerable so they can sell you the fix. Don't believe the hype. Don't trust the tools. Burn the code. Start over. With your hands.

Just a quick note: 'access control logic changed' should be 'access control logic was changed.' Also, 'it's not just about tools. it's about culture.' - missing capitalization on 'it's.' Small things matter. Especially when you're talking about security. And also, '28% of AI-refactored code samples from Snyk’s 2024 study had improper access control issues.' - the apostrophe in Snyk’s is correct, but the rest of the sentence needs a comma before 'had.' Just saying. I care.

As someone from India, I want to say this: we are not just users of these tools. We are builders. I’ve seen AI refactor code in our healthcare system, and yes, it broke a permission check. But we caught it because we built our own security test suite, based on Indian regulatory needs, not American marketing. We didn’t wait for Snyk or SonarQube. We built it ourselves. Local knowledge matters. Cultural context matters. AI doesn’t understand our rules. But we do. And we’re not waiting for permission to fix it.