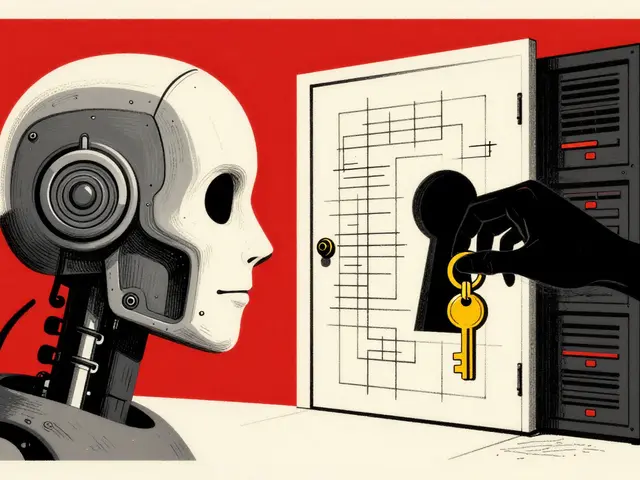

Chain-of-Thought in Vibe Coding: Why Explanations Before Code Work Better

Chain-of-Thought prompting improves AI-assisted coding by having the model explain its approach before it writes any code. Learn how asking for step-by-step reasoning cuts bugs, saves time, and has become standard practice for complex tasks.