Avoiding Proxy Discrimination in LLM-Powered Decision Systems

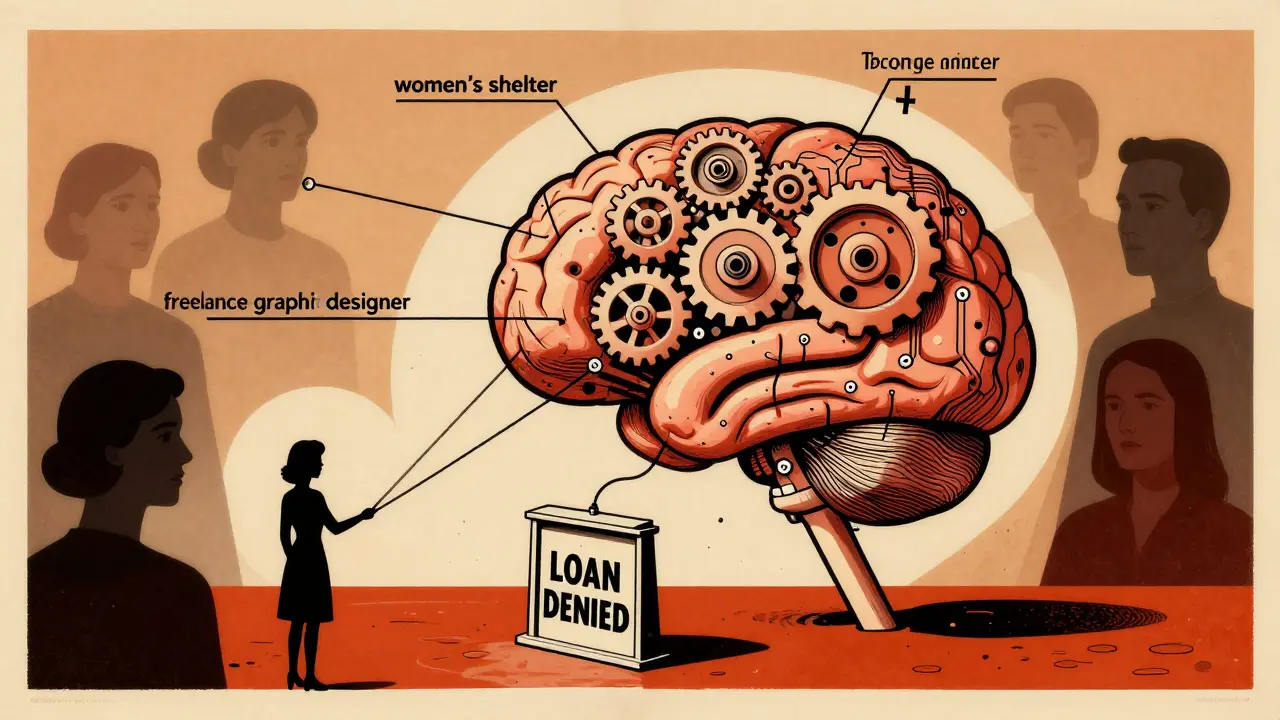

Proxy discrimination in LLM systems hides bias behind seemingly neutral data like zip codes or job titles. Learn how these systems find hidden patterns that unfairly target protected groups, and what organizations can do to stop it.

How to Detect Implicit vs Explicit Bias in Large Language Models

Large language models can appear fair but still harbor hidden biases. Learn how to detect implicit vs explicit bias using proven methods, why bigger models are often more biased, and what companies are doing to fix it.