Threat Modeling for Large Language Model Integrations in Enterprise Apps

Threat modeling for LLM integrations in enterprise apps is no longer optional. Learn the top five real-world risks-prompt injection, data poisoning, model theft, supply chain flaws, and insecure outputs-and how tools like AWS Threat Designer are making security practical for development teams.

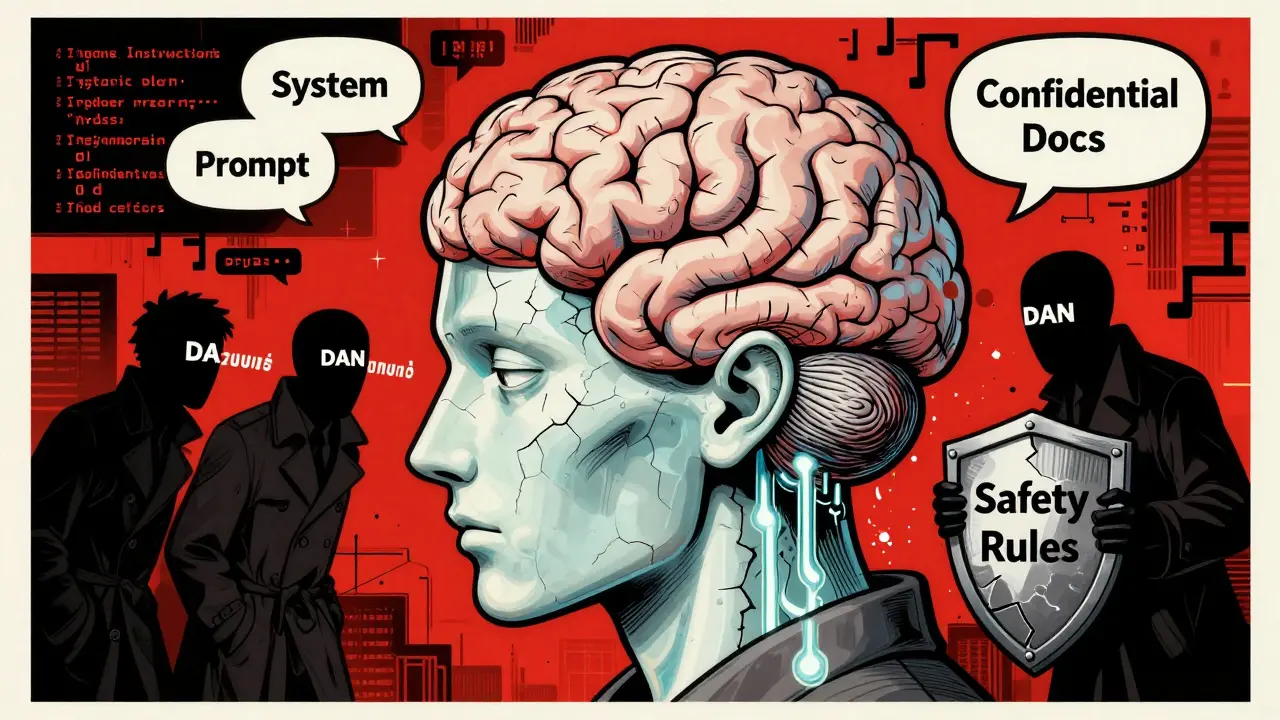

Security Risks in LLM Agents: Injection, Escalation, and Isolation

LLM agents can access systems, execute code, and make decisions autonomously-but that makes them dangerous if not secured. Learn how prompt injection, privilege escalation, and isolation failures lead to breaches, and what actually works to stop them.

Prompt Injection Risks in Large Language Models: How Attacks Work and How to Stop Them

Prompt injection attacks trick AI models into ignoring their rules, exposing sensitive data and enabling code execution. Learn how these attacks work, which systems are at risk, and what defenses actually work in 2025.