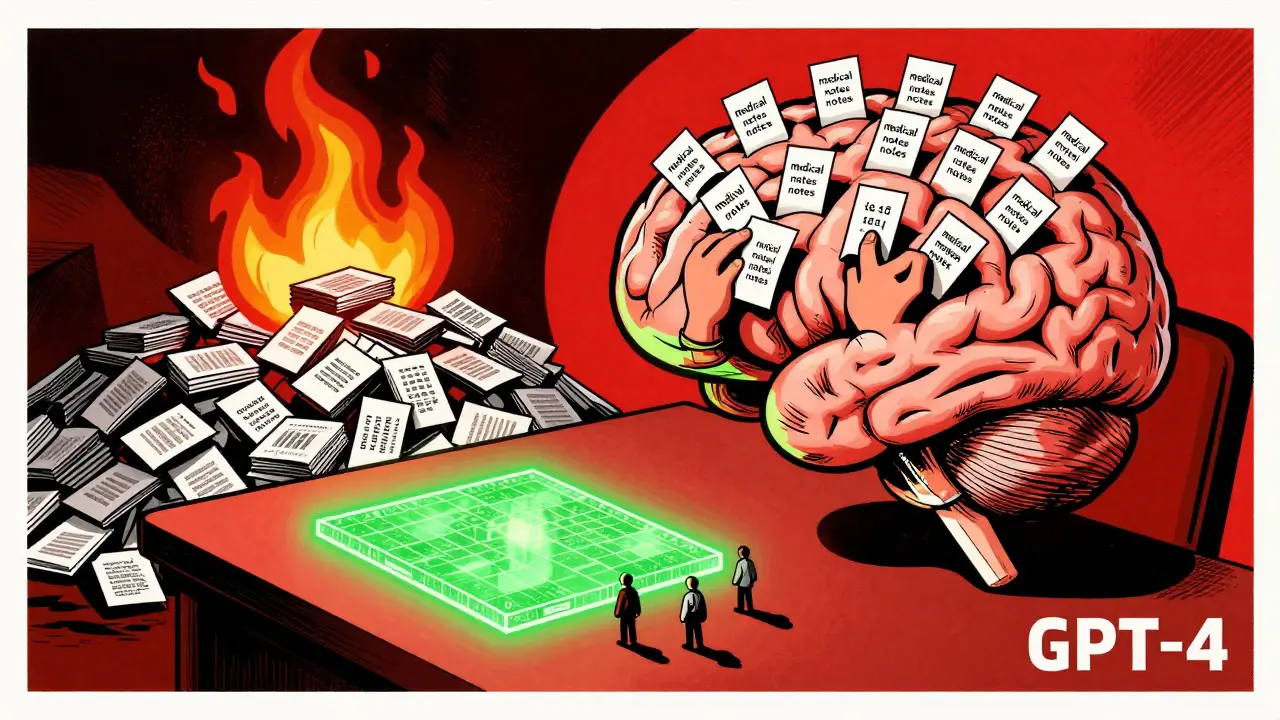

Few-Shot Fine-Tuning of Large Language Models: When Data Is Scarce

Few-shot fine-tuning lets you adapt powerful language models with as few as 50 examples, making AI practical for data-scarce fields like healthcare and legal tech. Learn how LoRA and QLoRA cut costs by 97% and what it really takes to get it right.

How to Reduce Memory Footprint for Hosting Multiple Large Language Models

Learn how to reduce the memory footprint of hosting multiple large language models with quantization, model parallelism, and hybrid techniques. Cut costs, run more models on less hardware, and avoid common pitfalls.