- Home

- AI & Machine Learning

- Building Content Moderation Pipelines for LLMs: A 2026 Security Guide

Building Content Moderation Pipelines for LLMs: A 2026 Security Guide

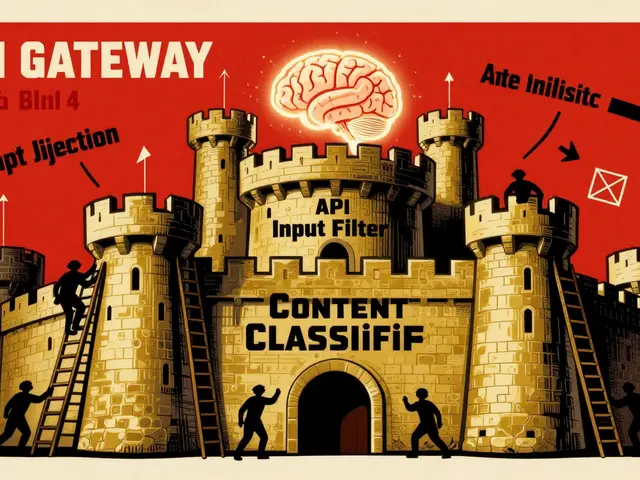

Imagine this: a user types a seemingly innocent question into your app’s chatbot. But hidden within that prompt is a jailbreak attempt designed to make the Large Language Model (LLM) generate hate speech or reveal private data. If your system doesn’t catch it, you’re liable. This isn’t just a hypothetical nightmare; it’s the daily reality for developers building public-facing AI applications in 2025 and 2026. As LLMs become more powerful, so do the attacks against them. Traditional keyword filters fail because they can’t understand context, leading to high false positives and missed threats. That’s why modern platforms are shifting toward sophisticated Content Moderation Pipelines specifically designed for user-generated inputs.

The goal here is simple but critical: stop harmful inputs before they reach the model, without slowing down the user experience. We’ll break down how these pipelines work, why hybrid architectures are the industry standard, and how you can implement them effectively while managing costs and compliance.

Why Traditional Filters Fail Against LLM Inputs

You might be thinking, "Can’t I just use a regex filter or a basic profanity checker?" For simple spam, maybe. But for LLM inputs, traditional Natural Language Processing (NLP) filters are dangerously inadequate. According to analysis from GetStream in May 2024, traditional NLP systems achieve 85-90% accuracy for obvious cases like profanity. However, when faced with context-dependent tasks-like sarcasm, coded language, or subtle jailbreak prompts-their accuracy drops to a precarious 62-68%.

LLMs, by contrast, maintain 88-92% accuracy across both clear-cut and nuanced scenarios. The problem isn’t just accuracy; it’s nuance. A phrase like "I want to blow up my business" could mean starting a company or describing an explosion. Traditional filters see the word "blow up" and block it. An LLM understands the intent and lets it pass. This contextual understanding is non-negotiable for secure AI applications.

- High False Positives: Legacy systems often block benign content, frustrating users and increasing support tickets.

- Lack of Context: They cannot distinguish between malicious intent and educational discussion.

- Inability to Handle Jailbreaks: Attackers constantly evolve their prompts to bypass static keyword lists.

The Hybrid Architecture: Speed Meets Accuracy

If LLMs are so accurate, why not run every single user input through a massive model like GPT-4 or Claude? You could, but it would bankrupt you. LLM-based moderation requires 300-500ms per request and carries significant computational costs. In real-time applications, that latency kills engagement, and the cost scales poorly during traffic spikes.

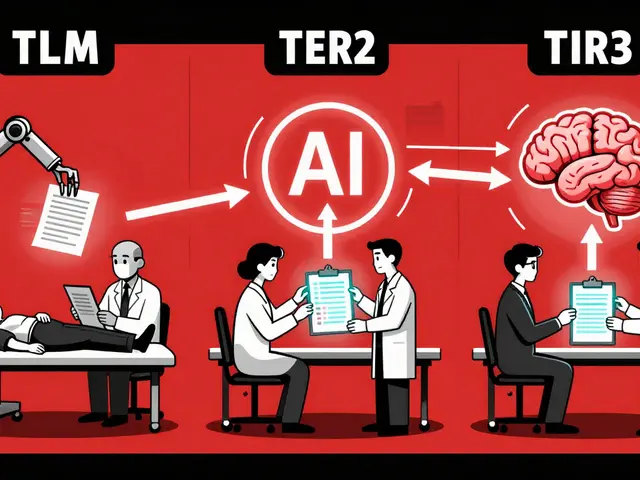

The solution adopted by major platforms like AWS and Meta is a Hybrid Moderation Pipeline. This architecture uses a tiered approach:

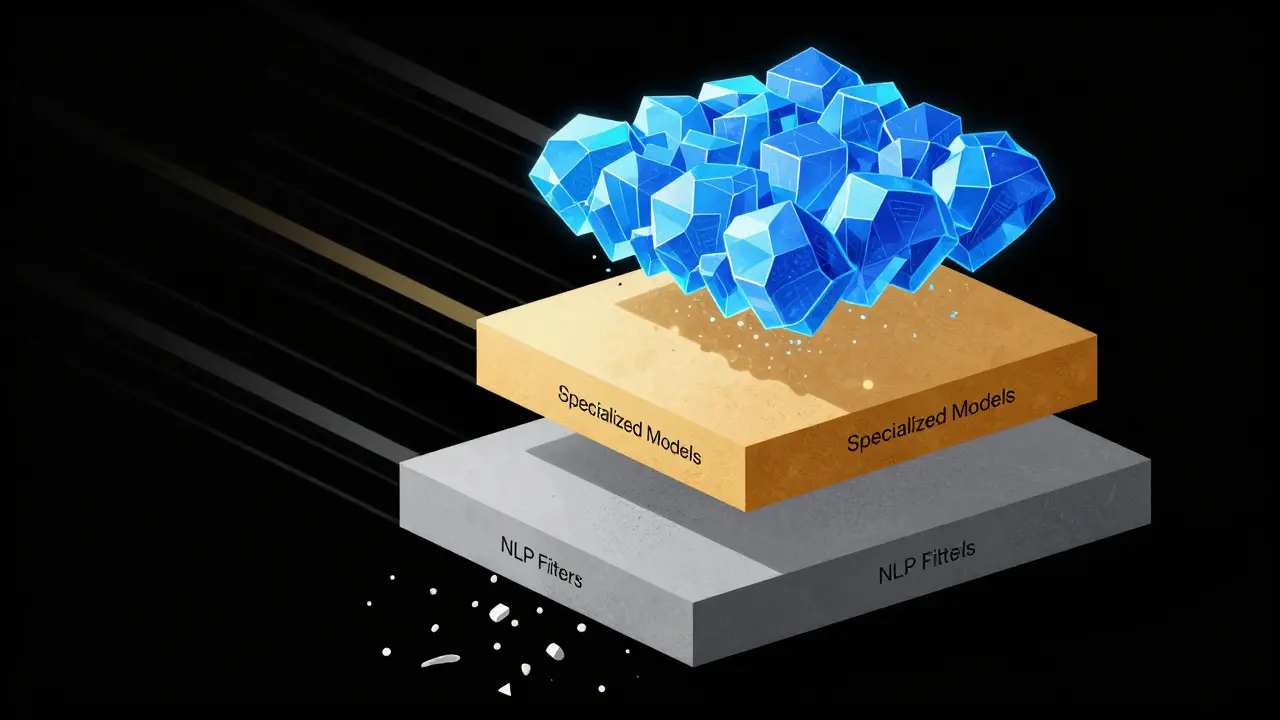

- Layer 1: Deterministic NLP Filters. These handle the low-hanging fruit. Profanity, known bad IPs, and obvious spam are filtered out in 15-25ms at near-zero cost. AWS reports that 78% of content is handled at this stage.

- Layer 2: Specialized Moderation Models. For ambiguous content, smaller, fine-tuned models step in. These are faster than general-purpose LLMs but more expensive than NLP.

- Layer 3: General-Purpose LLMs. Only the most complex, borderline cases (about 22% of total volume) are escalated to heavy-duty LLMs for final judgment.

This structure reduces overall operational costs by up to 63% while maintaining a total accuracy rate of over 93%. It’s the sweet spot between speed, cost, and security.

| Technology | Accuracy (Contextual) | Latency | Cost Efficiency |

|---|---|---|---|

| Traditional NLP | 62-68% | 15-25ms | Very High |

| Specialized ML Models | 85-90% | 50-100ms | Moderate |

| General-Purpose LLMs | 88-92% | 300-500ms | Low |

| Hybrid Pipeline | 93.1%+ | Variable (Optimized) | High |

The Rise of Policy-as-Prompt

One of the biggest shifts in 2024 and 2025 has been the move away from training separate classification models for every new rule. Instead, teams are adopting the Policy-as-Prompt paradigm. Described in detail in arXiv research from April 2024, this approach encodes content moderation policies directly as natural language instructions within the LLM’s system prompt.

Why does this matter? Because policies change. When a new type of misinformation spreads or a legal regulation updates, retraining a machine learning model takes months. With Policy-as-Prompt, you simply update the text instruction. Google researchers demonstrated that this allows moderation policies to be updated within 15 minutes of emerging threats.

For example, instead of training a model on thousands of examples of "financial scams," you write a prompt: "Flag any input that attempts to extract banking credentials or promises unrealistic financial returns." The LLM’s pre-training on vast web corpora allows it to understand this instruction immediately. This flexibility is crucial for dynamic environments where threat vectors evolve weekly.

Implementing Vector Databases for Retrieval-Augmented Moderation

To make Policy-as-Prompt effective, you need context. This is where Vector Databases like LlamaIndex come into play. Rather than sending the raw user input to the LLM alone, the pipeline first searches a vector database for similar past violations or specific policy guidelines relevant to the input’s topic.

CloudRaft documented implementations using LlamaIndex that process over 50,000 user comments per minute with sub-500ms response times. Here’s how it works:

- Embedding Generation: User inputs are converted into vector embeddings.

- Semantic Search: The system retrieves the top 5 most relevant policy clauses or historical violation examples.

- Prompt Construction: The LLM receives the user input + retrieved context + policy instructions.

- Decision: The LLM outputs a verdict based on the enriched context.

This method significantly reduces hallucinations and ensures that the moderation decision aligns with your specific platform rules, rather than just general internet norms.

Human-in-the-Loop: The Final Safety Net

No AI is perfect. Even the best LLM-based systems have an error rate of around 8.2% for benign content misclassification. To mitigate this, you must integrate a Human-in-the-Loop validation system. This isn’t about having humans review every post; it’s about strategic oversight.

Google’s RAG-based moderation system, for instance, has human moderators review 15% of AI-flagged content. This feedback loop is vital. When humans correct the AI, those corrections are fed back into the system to refine future prompts or adjust confidence thresholds. After three feedback cycles, Google reported accuracy jumping from 87.2% to 94.6%.

Furthermore, Professor Katsaros of MIT noted that LLMs build trust when used as transparency tools. Instead of silently blocking content, the system can explain *why* a decision was made, allowing users to appeal. This transparency reduces friction and helps identify systemic biases in the AI.

Cost Management and Scalability Challenges

Let’s talk money. LLM API calls are expensive. At $0.002 per 1,000 tokens, processing millions of user inputs adds up fast. During viral events or traffic spikes, costs can become unpredictable. Trustpilot reviews of commercial moderation services highlight "unpredictable API expenses" as a major pain point for 65% of respondents.

To manage this:

- Use Tiered Routing: As mentioned, only send hard cases to expensive models.

- Cash Decisions: Cache results for identical or highly similar inputs to avoid redundant API calls.

- Local Models for Pre-filtering: Run open-source models like LLAMA Guard locally on your servers for initial screening. Meta’s LLAMA3 8B achieves 92.7% accuracy with reduced operational overhead compared to cloud APIs.

Also, consider multilingual challenges. Performance drops by 15-22% for low-resource languages. Ensure your pipeline includes language detection and routes non-English inputs to models specifically fine-tuned for those languages, or use translation layers before moderation.

Regulatory Compliance in 2026

You can’t ignore the legal landscape. The EU AI Act and other global regulations require "appropriate technical solutions" for content moderation in high-risk AI systems. By 2026, 82% of affected companies have accelerated their LLM moderation implementations to comply.

Key compliance requirements include:

- Audit Trails: Every moderation decision must be logged, including the AI’s reasoning and any human overrides.

- Bias Mitigation: Regularly test your pipeline for demographic bias. Research shows certain demographic references trigger false positives at rates 3.7x higher than neutral content.

- User Rights: Provide clear mechanisms for users to contest automated decisions.

Gartner predicts that by 2026, 75% of major platforms will use hybrid systems combining NLP, LLMs, and human review. Falling behind this standard puts your organization at significant legal and reputational risk.

What is a content moderation pipeline for LLMs?

A content moderation pipeline for LLMs is a multi-stage system that filters and regulates user-submitted content before it interacts with a generative AI model. It combines traditional NLP filters, specialized machine learning models, and large language models to detect harmful inputs like hate speech, jailbreaks, and misinformation while minimizing false positives and latency.

Why are traditional keyword filters insufficient for LLM security?

Traditional keyword filters lack contextual understanding. They struggle with sarcasm, coded language, and nuanced jailbreak attempts, resulting in high false positive rates (35-45%) and missing sophisticated attacks. LLMs provide superior contextual accuracy (88-92%) by understanding intent rather than just matching words.

How does the 'Policy-as-Prompt' approach work?

Policy-as-Prompt involves encoding content moderation rules directly into the LLM's system prompt as natural language instructions. This eliminates the need for extensive manual annotation and retraining, allowing organizations to update moderation policies in minutes rather than months in response to emerging threats.

What is the role of Human-in-the-Loop in AI moderation?

Human-in-the-Loop refers to the practice of having human moderators review a subset of AI-flagged content. This provides critical feedback to improve AI accuracy, handles edge cases the AI misses, ensures regulatory compliance, and offers transparency by allowing users to appeal automated decisions.

How can companies reduce the cost of LLM-based moderation?

Companies can reduce costs by implementing a hybrid architecture that uses cheap, fast NLP filters for obvious violations and reserves expensive LLM API calls for ambiguous cases. Caching decisions for similar inputs and using local open-source models for pre-filtering also significantly lower operational expenses.

Are LLM moderation systems biased?

Yes, LLM moderation systems can exhibit bias. Research indicates that certain demographic references may trigger false positives at rates up to 3.7 times higher than neutral content. Regular auditing, diverse training data, and human oversight are essential to mitigate these biases and ensure fair treatment of all users.

Susannah Greenwood

I'm a technical writer and AI content strategist based in Asheville, where I translate complex machine learning research into clear, useful stories for product teams and curious readers. I also consult on responsible AI guidelines and produce a weekly newsletter on practical AI workflows.

About

EHGA is the Education Hub for Generative AI, offering clear guides, tutorials, and curated resources for learners and professionals. Explore ethical frameworks, governance insights, and best practices for responsible AI development and deployment. Stay updated with research summaries, tool reviews, and project-based learning paths. Build practical skills in prompt engineering, model evaluation, and MLOps for generative AI.