Building Content Moderation Pipelines for LLMs: A Practical Guide to Security and Safety

Imagine launching a new chatbot that lets users ask anything. Within minutes, someone tries to trick it into generating hate speech or leaking private data. Without a robust defense, your model complies. This is the harsh reality of deploying Large Language Models (LLMs) in public-facing applications. The core problem isn't just about stopping bad output; it's about securing the input pipeline before harmful prompts even reach the model.

Content moderation pipelines for user-generated inputs have become the critical infrastructure layer between raw user data and your AI engine. These systems don't just block keywords; they analyze intent, context, and nuance to prevent security risks like prompt injection, jailbreaking, and the generation of illegal or harmful content. As we move through 2026, the approach has shifted from simple regex filters to sophisticated hybrid architectures involving specialized models, vector databases, and human-in-the-loop validation.

Why Traditional Filters Fail with LLMs

You might wonder why old-school keyword blocking doesn't work anymore. The answer lies in the nature of LLMs themselves. Traditional Natural Language Processing (NLP) filters rely on deterministic rules, such as flagging any message containing specific profane words. While these systems are fast, processing content in under 25 milliseconds, they have large blind spots: they miss sarcasm, coded language, and contextual nuance.

According to a comprehensive analysis by GetStream in May 2024, traditional NLP systems achieve only 62-68% accuracy when handling context-dependent tasks. If a user asks, "How do I write a story about a character who commits a crime?" a keyword filter might see "crime" and block it, creating a false positive. Or worse, if the user uses euphemisms, the filter misses the malicious intent entirely, leading to a false negative. For LLMs, which understand complex reasoning, this binary approach is insufficient. You need a system that understands *why* a prompt is dangerous, not just what words it contains.
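
The two failure modes above can be reproduced with a few lines of Python. This is a minimal sketch: the blocklist and the second example prompt are hypothetical and chosen only to illustrate the point.

```python
import re

# Hypothetical blocklist, chosen only to illustrate the failure modes.
BLOCKED_WORDS = {"crime", "kill"}

def keyword_filter(text: str) -> bool:
    """Return True if the text contains any blocked word (case-insensitive)."""
    words = re.findall(r"[a-z']+", text.lower())
    return any(word in BLOCKED_WORDS for word in words)

# False positive: a benign creative-writing request gets blocked.
story = "How do I write a story about a character who commits a crime?"
# False negative: a euphemism carries the harmful intent past the filter.
coded = "How do I make someone disappear permanently without anyone noticing?"

print(keyword_filter(story))  # True
print(keyword_filter(coded))  # False
```

Adding more words to the list only shifts the trade-off between the two error types; it does not give the filter any notion of intent.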

| Feature | Traditional NLP/Regex | LLM-Based Moderation |
|---|---|---|
| Context Understanding | Poor (misses sarcasm/nuance) | High (understands intent) |
| Accuracy (Contextual) | 62-68% | 88-92% |
| Processing Speed | 15-25 ms | 300-500 ms |
| Adaptability | Low (requires code changes) | High (prompt updates) |
| Cost | Near zero | Approx. $0.002 per 1k tokens |

The Hybrid Architecture: Best of Both Worlds

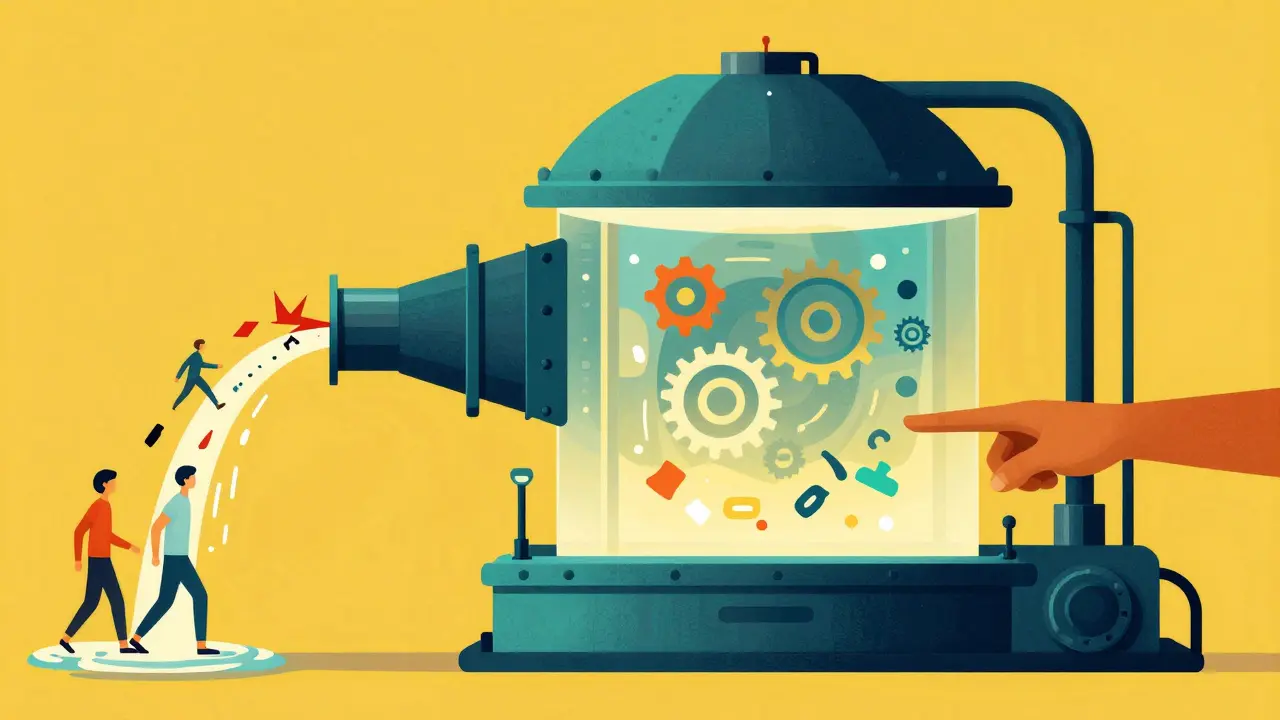

Because LLM-based moderation is computationally expensive, running every single user prompt through a heavy model is neither cost-effective nor scalable. The industry standard in 2026 is a tiered, hybrid architecture. This approach balances speed, cost, and accuracy by using lightweight filters for obvious violations and reserving powerful LLMs for ambiguous cases.

Here is how a typical high-performance pipeline works:

- Preprocessing & Lightweight Filtering: The first stage uses deterministic rules and basic NLP to catch clear-cut violations like explicit profanity, known malware signatures, or banned IP addresses. This stage handles roughly 78% of traffic instantly and at near-zero cost.

- Vector Embedding Search: For inputs that pass the first filter, the system converts the text into vector embeddings. Using a retrieval framework like LlamaIndex, these vectors are compared against a vector store of known malicious patterns or previously flagged content. This helps identify subtle variations of known attacks.

- LLM Classification: Ambiguous prompts (the remaining ~22%) are sent to a specialized moderation model or a general-purpose LLM with a carefully crafted system prompt. This stage analyzes intent, tone, and potential harm.

- Decision Routing: Based on the LLM's confidence score, the system either blocks the request, allows it, or flags it for human review.
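
The four stages above can be sketched as a single function. This is a simplified illustration, not a reference implementation: the blocklist, the two confidence thresholds, and the `matches_known_attack` and `classify` callables (stand-ins for the vector lookup and the LLM call) are all assumptions. The sketch also only short-circuits on blocks at stage 1, whereas a real first stage would resolve clearly safe traffic too.

```python
from dataclasses import dataclass
from typing import Callable, Optional

BANNED_TERMS = {"badword"}   # hypothetical stage-1 blocklist
BLOCK_THRESHOLD = 0.90       # assumed cut-offs; tune them on your own data
ALLOW_THRESHOLD = 0.10

@dataclass
class Decision:
    action: str  # "block", "allow", or "review"
    stage: str   # which stage made the call

def lightweight_filter(text: str) -> Optional[Decision]:
    """Stage 1: deterministic rules for clear-cut violations."""
    if any(term in text.lower() for term in BANNED_TERMS):
        return Decision("block", "rules")
    return None

def route(harm_probability: float) -> Decision:
    """Stage 4: map the classifier's harm probability to an action."""
    if harm_probability >= BLOCK_THRESHOLD:
        return Decision("block", "llm")
    if harm_probability <= ALLOW_THRESHOLD:
        return Decision("allow", "llm")
    return Decision("review", "llm")

def moderate(
    text: str,
    matches_known_attack: Callable[[str], bool],
    classify: Callable[[str], float],
) -> Decision:
    decision = lightweight_filter(text)
    if decision is not None:
        return decision
    if matches_known_attack(text):        # Stage 2: embedding lookup (stubbed)
        return Decision("block", "vector")
    return route(classify(text))          # Stages 3 and 4

# Stubs standing in for the vector store and the moderation model.
never_matches = lambda text: False
unsure_llm = lambda text: 0.5

print(moderate("this contains badword", never_matches, unsure_llm))
# Decision(action='block', stage='rules')
print(moderate("an ambiguous request", never_matches, unsure_llm))
# Decision(action='review', stage='llm')
```

Passing the later stages in as callables keeps the routing logic testable without a live model or vector store.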

AWS documented this strategy in their August 2024 Media Analysis solution, showing that this tiered approach reduced overall operational costs by 63% while maintaining a total accuracy rate of 93.1%. By offloading the easy decisions to cheap filters, you save your expensive compute resources for the hard problems.

Policy-as-Prompt: The New Standard

One of the most significant shifts in content moderation is the move away from training separate classification models for every rule. Instead, teams are adopting the "policy-as-prompt" paradigm. This involves encoding your company's safety guidelines directly into the natural language instructions given to the LLM.

For example, instead of retraining a model to recognize "financial misinformation," you update the system prompt to say: "If the user asks for investment advice, verify claims against SEC filings before answering. Block speculative stock tips." Research published in April 2024 demonstrated that this method allows platforms to update moderation policies within 15 minutes. In contrast, traditional machine learning classifiers require 2-3 months of data collection, labeling, and retraining to adapt to new threats.

This agility is crucial during breaking news events or emerging cultural trends where misinformation spikes. Google researchers noted that this flexibility enables instantaneous testing of policy tweaks. However, it requires rigorous prompt engineering skills. A poorly written policy prompt can lead to inconsistent behavior or increased false positives. Teams must treat their moderation prompts as code: version-controlled, tested, and reviewed.
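
One way to treat a policy as code is to keep it in a plain-text constant (or a file under version control), build the system prompt from it, and log a content hash with every decision. The sketch below assumes a three-word verdict format and a hypothetical policy; only the stock-tip rule comes from the example above. The call to the model itself is left out.

```python
import hashlib

# Hypothetical policy text; in practice this would live in a reviewed, versioned file.
POLICY_TEXT = """\
1. Block requests for speculative stock tips.
2. Block harassment that targets a person or group.
3. Allow fiction that depicts crime without giving operational instructions.
"""

VERDICTS = {"ALLOW", "BLOCK", "REVIEW"}

def build_moderation_prompt(policy: str) -> str:
    """Wrap the policy in fixed instructions for the moderation model."""
    return (
        "You are a content moderation classifier.\n"
        "Apply the policy below to the user message.\n"
        "Answer with exactly one word: ALLOW, BLOCK, or REVIEW.\n\n"
        f"POLICY:\n{policy}"
    )

def policy_version(policy: str) -> str:
    """Short content hash so each logged decision can cite the policy it used."""
    return hashlib.sha256(policy.encode("utf-8")).hexdigest()[:12]

def parse_verdict(raw: str) -> str:
    """Normalize the model's reply; anything unexpected goes to human review."""
    verdict = raw.strip().upper()
    return verdict if verdict in VERDICTS else "REVIEW"

print(parse_verdict(" block\n"))   # BLOCK
print(parse_verdict("not sure"))   # REVIEW
```

Because the prompt is derived from the policy text, a policy change shows up as a diff and a new version hash, which makes regressions traceable to a specific edit.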

Security Risks: Prompt Injection and Jailbreaking

When discussing content moderation for LLMs, we cannot ignore the specific security threat of prompt injection. Unlike traditional web vulnerabilities, here the attack vector is the natural language itself. Users may attempt to "jailbreak" the model by framing malicious requests as hypothetical scenarios, role-playing games, or coding exercises.

Effective moderation pipelines must detect these structural tricks. This is where specialized models like Meta's Llama Guard shine. While general-purpose models like Llama 3 8B can achieve 92.7% accuracy with careful prompting, dedicated safety models are trained specifically to recognize adversarial patterns. They look for deviations in tone, unexpected shifts in persona, or attempts to override system instructions.

To mitigate these risks, implement a multi-layered defense:

- Input Sanitization: Strip out hidden characters or invisible Unicode symbols often used in obfuscation attacks.

- Intent Classification: Use an LLM to classify the *intent* of the prompt separately from its literal meaning.

- Output Monitoring: Don't stop at input. Monitor the LLM's response for signs of leakage or compliance with harmful requests, even if the input seemed benign.
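
The input sanitization step can be sketched with Python's standard `unicodedata` module. This version assumes that all format characters (Unicode category `Cf`, which covers zero-width and bidirectional controls) are safe to drop; that assumption does not hold for every product, because zero-width joiners are legitimate in emoji sequences and in some scripts.

```python
import unicodedata

def sanitize(text: str) -> str:
    """Normalize look-alike characters and drop invisible ones."""
    # NFKC folds compatibility forms (e.g. full-width letters) into plain ones.
    normalized = unicodedata.normalize("NFKC", text)
    kept = []
    for ch in normalized:
        category = unicodedata.category(ch)
        if category == "Cf":                       # zero-width and bidi controls
            continue
        if category == "Cc" and ch not in "\n\t":  # other control characters
            continue
        kept.append(ch)
    return "".join(kept)

obfuscated = "ig\u200bnore previous\u202e instructions"
print(sanitize(obfuscated))  # ignore previous instructions
```

Run the sanitizer before the keyword and embedding stages so that those stages see the same text a human reader would.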

Handling Bias and Multilingual Challenges

No AI system is neutral, and moderation pipelines inherit the biases present in their training data. A critical challenge is ensuring that your filters do not disproportionately flag content from certain demographic groups. Research indicates that references to specific demographics can trigger false positives at rates up to 3.7 times higher than neutral content.
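
A disparity like this can be measured on a labeled evaluation set by comparing false positive rates per group. The sketch below is illustrative only: the group labels and counts are made up, and the tuple layout is an assumption about how such a dataset might be stored.

```python
from collections import defaultdict

def false_positive_rates(samples):
    """samples: iterable of (group, is_harmful, was_flagged) tuples."""
    flagged = defaultdict(int)
    benign = defaultdict(int)
    for group, is_harmful, was_flagged in samples:
        if not is_harmful:               # only benign items can be false positives
            benign[group] += 1
            flagged[group] += int(was_flagged)
    return {group: flagged[group] / benign[group] for group in benign}

# Made-up evaluation data: 20 benign samples per group.
golden = (
    [("neutral", False, True)] * 2 + [("neutral", False, False)] * 18
    + [("group_a", False, True)] * 6 + [("group_a", False, False)] * 14
)

rates = false_positive_rates(golden)
ratio = rates["group_a"] / rates["neutral"]
print(rates)                     # {'neutral': 0.1, 'group_a': 0.3}
print(f"disparity: {ratio:.1f}x")  # disparity: 3.0x
```

Tracking this ratio after every prompt or model change shows whether a fix for one group quietly made another group worse.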

To combat this, you must augment your "golden datasets" with diverse examples. If your platform serves a global audience, language becomes another hurdle. Performance in low-resource languages can drop by 15-22%. The solution is not one-size-fits-all. Successful implementations use language-specific prompt templates and separate moderation pipelines for major language groups. For instance, a joke in Spanish might be culturally acceptable but flagged as aggressive by an English-trained model. Detecting the language early in the pipeline allows you to route the content to the appropriate linguistic context handler.
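
Routing by language can be as simple as a template lookup with a cautious fallback. In this sketch the language code is assumed to come from an upstream detector that is not shown, the two templates are hypothetical, and sending unsupported languages to human review is one possible design choice rather than a rule from the research cited above.

```python
# Hypothetical per-language prompt templates.
PROMPT_TEMPLATES = {
    "en": "Moderate the following English message under this policy: {policy}",
    "es": "Modera el siguiente mensaje en español según esta política: {policy}",
}
DEFAULT_LANGUAGE = "en"

def select_template(language_code: str) -> tuple[str, bool]:
    """Return (template, needs_human_review) for a detected language code."""
    base = language_code.lower().split("-")[0]   # "es-MX" -> "es"
    if base in PROMPT_TEMPLATES:
        return PROMPT_TEMPLATES[base], False
    # No dedicated template: fall back, but do not trust the result blindly.
    return PROMPT_TEMPLATES[DEFAULT_LANGUAGE], True

template, needs_review = select_template("es-MX")
print(needs_review)                 # False
print(select_template("sw")[1])     # True
```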

Human-in-the-Loop: The Final Safety Net

Despite advances in AI, humans remain essential for trust and safety. Fully automated systems can create echo chambers or make catastrophic errors during edge cases. The most effective pipelines integrate human reviewers for contested decisions.

Google's RAG-based moderation system implemented a process where human moderators reviewed 15% of AI-flagged content. After just three feedback cycles, this human oversight improved the system's overall accuracy from 87.2% to 94.6%. More importantly, Professor Katsaros of MIT highlighted that LLMs build more trust when used as transparency tools that explain *why* content was moderated, rather than as opaque arbiters. Providing users with a clear, human-readable reason for a block reduces frustration and appeals.
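
Sampling a fixed share of flagged content for review can be done deterministically by hashing each item's ID, so the same item always gets the same decision across retries and services. This is a sketch of one possible approach, not a description of how the system above selects its 15%; the ID format is hypothetical.

```python
import hashlib

REVIEW_RATE = 0.15  # share of AI-flagged items routed to human moderators

def needs_human_review(content_id: str, rate: float = REVIEW_RATE) -> bool:
    """Select roughly `rate` of flagged items by hashing their ID."""
    digest = hashlib.sha256(content_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64   # uniform in [0, 1)
    return bucket < rate

# Hypothetical IDs for 10,000 flagged items.
flagged_ids = [f"msg-{i}" for i in range(10_000)]
selected = [cid for cid in flagged_ids if needs_human_review(cid)]
print(f"{len(selected) / len(flagged_ids):.1%} sent to human review")
```

Items with low classifier confidence would normally be reviewed in addition to this random sample, since the sample exists to catch errors the model is confident about.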

Implementation Roadmap for 2026

If you are building a content moderation pipeline today, follow this phased approach:

- Define Policies Clearly: Write your safety guidelines in plain language. These will become your prompts. Be specific about what constitutes harassment, misinformation, or violence.

- Start with a Hybrid Model: Deploy lightweight NLP filters for immediate protection. Integrate a retrieval framework like LlamaIndex, backed by a vector store, for semantic search.

- Select Your Core Model: Choose between a specialized model (like Llama Guard) for higher accuracy or a general-purpose LLM (like Llama 3) for flexibility. Test both with your specific dataset.

- Implement Feedback Loops: Set up a dashboard for human reviewers to override AI decisions. Use these overrides to refine your prompts and to update the flagged-content examples in your vector index.

- Monitor Costs and Latency: Track the percentage of traffic hitting your LLM stage. Optimize your initial filters to reduce this load. Aim for sub-500ms response times for user-facing interactions.
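
For the last step, a small in-process tracker is enough to see how much traffic reaches the LLM stage and what that does to latency. The class below is a hypothetical sketch; the latency figures in the usage example are assumptions picked from the ranges in the comparison table, and a production system would export these numbers to its metrics stack instead.

```python
from collections import Counter

class PipelineStats:
    """Tracks which stage resolved each request and how long it took."""

    def __init__(self) -> None:
        self.stage_counts = Counter()
        self.total_latency_ms = 0.0

    def record(self, stage: str, latency_ms: float) -> None:
        self.stage_counts[stage] += 1
        self.total_latency_ms += latency_ms

    @property
    def total(self) -> int:
        return sum(self.stage_counts.values())

    def llm_share(self) -> float:
        """Fraction of requests that reached the LLM stage."""
        return self.stage_counts["llm"] / self.total if self.total else 0.0

    def mean_latency_ms(self) -> float:
        return self.total_latency_ms / self.total if self.total else 0.0

stats = PipelineStats()
for _ in range(78):
    stats.record("rules", 20.0)   # resolved by the cheap filter
for _ in range(22):
    stats.record("llm", 420.0)    # filter time plus an assumed 400 ms LLM call

print(f"LLM share: {stats.llm_share():.0%}")              # LLM share: 22%
print(f"Mean latency: {stats.mean_latency_ms():.0f} ms")  # Mean latency: 108 ms
```

The mean hides the tail: the 22% of requests that reach the LLM still take about 420 ms each in this example, so track a high percentile as well as the average.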

The market for content moderation technology is growing rapidly, valued at $1.8 billion in mid-2024. With regulations like the EU AI Act requiring "appropriate technical solutions" for high-risk AI, having a robust, auditable moderation pipeline is no longer optional; it's a legal necessity. By combining speed, intelligence, and human oversight, you can protect your users and your brand without stifling creativity.

What is the 'policy-as-prompt' approach in content moderation?

The 'policy-as-prompt' approach involves encoding content moderation rules directly into the natural language instructions (prompts) given to an LLM, rather than training a separate classification model for each rule. This allows platforms to update safety policies in minutes by simply changing the text prompt, whereas traditional ML models require weeks or months of retraining.

Why are traditional keyword filters insufficient for LLMs?

Traditional keyword filters lack contextual understanding. They cannot distinguish between benign discussions and harmful intent, leading to high false positive rates (blocking safe content) and false negatives (missing coded or sarcastic attacks). LLMs require moderation systems that understand nuance, intent, and structure, which keyword lists cannot provide.

How does a hybrid moderation pipeline reduce costs?

A hybrid pipeline uses cheap, fast deterministic filters (such as regex rules or basic NLP) to resolve the clear-cut cases (approx. 78% of traffic). Only ambiguous or complex cases are escalated to expensive LLM analysis. This tiered approach significantly reduces API costs while maintaining high accuracy.

What is prompt injection, and how do moderation pipelines prevent it?

Prompt injection is an attack where users try to trick an LLM into ignoring its safety guidelines by embedding malicious instructions within their input. Moderation pipelines prevent this by using specialized safety models (like Llama Guard) to detect adversarial patterns, analyzing the intent of the prompt separately from its content, and sanitizing inputs for hidden characters.

Is human review still necessary in AI-driven moderation?

Yes, human review is critical for edge cases, bias mitigation, and building user trust. In the Google system described earlier, human feedback loops improved accuracy from 87.2% to 94.6%. Giving users a clear explanation for each moderation decision also increases transparency and reduces frustration.

Susannah Greenwood

I'm a technical writer and AI content strategist based in Asheville, where I translate complex machine learning research into clear, useful stories for product teams and curious readers. I also consult on responsible AI guidelines and produce a weekly newsletter on practical AI workflows.
