
Legal Counsel Playbook for Generative AI: Priorities, Checklists, and Training

When your legal team spends 4.5 hours a day just reviewing contracts, something has to change. That’s not a hypothetical; it’s the daily reality for many in-house counsel. Generative AI isn’t coming to the legal department; it’s already here. The question isn’t whether to use it, but how to use it well. A legal counsel playbook for generative AI is no longer optional. It’s the difference between scaling efficiently and making costly mistakes.

Why Playbooks Matter More Than Ever

Think of a legal playbook as your organization’s institutional memory turned into machine-readable rules. It’s not just software. It’s the codified way your company handles NDAs, vendor agreements, employment contracts, and IP licenses. Without a playbook, every lawyer reviews documents differently. One attorney might push hard on liability caps. Another might accept them without question. That inconsistency creates risk.

Generative AI changes that. When trained on your actual contracts, past negotiations, and internal guidelines, it applies your standards the same way on every review. A new hire on day one can review an NDA against the same standards your general counsel would apply. That’s not magic. It’s system design.

According to Dioptra.ai’s 2025 data, teams using AI playbooks cut contract review time by up to 50% within six months. But here’s the catch: the AI doesn’t think. It doesn’t judge. It just follows the rules you feed it. If your playbook is outdated, incomplete, or poorly written, the AI will make mistakes. And those mistakes can cost you millions.

The Core Components of a Legal AI Playbook

A strong playbook has four non-negotiable parts:

- Training Data - Your own contracts, precedents, and internal guidance. Not generic templates. Not public examples. Your real documents from the last 12-24 months. This includes signed NDAs, vendor agreements, software licenses, and even emails where legal teams negotiated changes.

- Decision Rules - Clear, unambiguous instructions. For example: “If the vendor requests unlimited liability, flag it. Offer a cap at 1.5x the contract value. If they refuse, escalate to the CFO.”

- Redlining Logic - How the AI should suggest edits. Should it highlight changes? Propose alternatives? Insert commentary? This must match your team’s workflow. Tools like Microsoft Word and CLM platforms (e.g., Icertis, Conga) need to integrate seamlessly.

- Escalation Triggers - When does the AI say, “I can’t handle this”? High-risk clauses, novel jurisdictions, regulatory changes, or unique deal structures should auto-flag for human review.
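To make decision rules and escalation triggers concrete, here is a minimal Python sketch of the liability example above expressed as code. It is not how any particular tool (Gavel, Dioptra, or a CLM platform) stores rules: the `Clause` and `Finding` types, the `review_liability` function, and the set of covered jurisdictions are hypothetical, and an unlimited-liability request is modelled as a cap of `None`.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical clause representation; real review tools use their own schemas.
@dataclass
class Clause:
    kind: str                        # e.g. "liability"
    text: str
    liability_cap: Optional[float]   # None models an unlimited-liability request
    jurisdiction: str = "US"

@dataclass
class Finding:
    action: str                      # "accept", "redline", or "escalate"
    comment: str                     # commentary a reviewer would see
    escalate_to: Optional[str] = None

CAP_MULTIPLIER = 1.5                  # fallback position from the playbook example
COVERED_JURISDICTIONS = {"US", "UK"}  # hypothetical: jurisdictions the playbook has rules for

def review_liability(clause: Clause, contract_value: float,
                     vendor_refused_cap: bool = False) -> Finding:
    # Escalation trigger: a novel jurisdiction goes to a human before any rule runs.
    if clause.jurisdiction not in COVERED_JURISDICTIONS:
        return Finding("escalate", f"No playbook rules for {clause.jurisdiction}.", "attorney")
    if clause.liability_cap is None:
        if vendor_refused_cap:
            # Decision rule, final step: the vendor rejected the capped fallback.
            return Finding("escalate", "Vendor refused the capped fallback.", "CFO")
        fallback = CAP_MULTIPLIER * contract_value
        # Decision rule: flag unlimited liability and propose the 1.5x cap as a redline.
        return Finding("redline", f"Unlimited liability flagged; propose a cap of {fallback:,.0f}.")
    # Capped liability is outside this single example rule, so nothing is flagged.
    return Finding("accept", "Liability is capped; no flag under this rule.")
```

Keeping the 1.5x multiplier and the covered jurisdictions as named constants means a fallback position can change without touching the logic.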

These aren’t theoretical. One Fortune 500 tech company reduced NDA review time from 90 minutes to 15 minutes after building their playbook. Zero errors in six months. That’s not luck. That’s structure.

Top Three Priorities for Legal Teams

Before you train your AI, focus on these three priorities. Skip any one, and you risk failure.

1. Data Quality Over Quantity

Garbage in, garbage out. If your training data includes outdated templates, inconsistent language, or poorly negotiated clauses, your AI will learn those flaws. Start by cleaning your contract library. Remove duplicates. Flag versions that were never signed. Pull out examples where legal overruled business requests; those reveal your real policy.

Dioptra.ai recommends using at least 12 months of executed contracts. That captures seasonal variations-like how Q4 vendor agreements often have different payment terms than Q1 ones.
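The mechanical parts of that cleanup can be scripted once your contract repository can export records. The sketch below assumes a hypothetical `ContractRecord` export with a signed flag and an execution date; it drops never-signed drafts, keeps only the last 12 months, and removes copies whose text is identical after whitespace and case are normalized. Near-duplicates (say, the same NDA with a different counterparty name) would need fuzzier matching than this.

```python
import hashlib
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

@dataclass
class ContractRecord:
    # Hypothetical shape of a record exported from a contract repository.
    doc_id: str
    contract_type: str
    text: str
    signed: bool
    executed_on: Optional[date]

def normalize(text: str) -> str:
    # Lowercase and collapse whitespace so reformatted copies hash the same.
    return " ".join(text.lower().split())

def clean_library(records: List[ContractRecord], today: date,
                  months: int = 12) -> List[ContractRecord]:
    cutoff = today - timedelta(days=round(months * 365.25 / 12))  # approximate month length
    seen_hashes = set()
    kept = []
    for rec in records:
        if not rec.signed or rec.executed_on is None:
            continue  # never-signed drafts do not reflect agreed positions
        if rec.executed_on < cutoff:
            continue  # outside the lookback window
        digest = hashlib.sha256(normalize(rec.text).encode("utf-8")).hexdigest()
        if digest in seen_hashes:
            continue  # duplicate text after normalization
        seen_hashes.add(digest)
        kept.append(rec)
    return kept
```

Identifying where legal overruled the business still needs a human pass; the script only clears the noise first.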

2. Governance, Not Just Technology

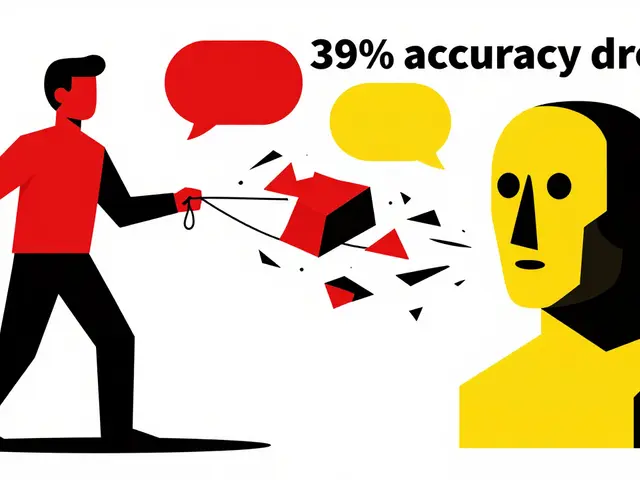

AI isn’t a tool you install. It’s a process you own. The Association of Corporate Counsel (ACC) says 82% of legal leaders fear “hallucinations” and accuracy drift. That’s because AI doesn’t know when it’s wrong. It only knows what it was trained on.

Build a governance committee. Include legal ops, compliance, risk, and at least one senior attorney. Meet monthly. Review AI outputs. Update rules. Track false positives. If the AI flags a clause that’s actually acceptable, adjust the rule. If it misses a red flag, add it.
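One way to give the monthly meeting something concrete to review is a per-rule scorecard built from attorney verdicts. This is a sketch under assumptions: each log entry is a hypothetical dict recording which playbook rule or clause category it belongs to, whether the AI flagged it, and whether the reviewing attorney judged it a real issue.

```python
from collections import defaultdict

def rule_scorecard(log):
    """Tally reviewer verdicts per playbook rule (or clause category).

    Each entry is a hypothetical dict:
      {"rule": str, "ai_flagged": bool, "reviewer_says_issue": bool}
    """
    stats = defaultdict(lambda: {"false_positive": 0, "missed": 0, "correct": 0})
    for entry in log:
        s = stats[entry["rule"]]
        if entry["ai_flagged"] and not entry["reviewer_says_issue"]:
            s["false_positive"] += 1   # flagged, but the attorney found it acceptable
        elif not entry["ai_flagged"] and entry["reviewer_says_issue"]:
            s["missed"] += 1           # a red flag the AI did not raise
        else:
            s["correct"] += 1          # AI and attorney agreed
    return dict(stats)

def monthly_agenda(scorecard, min_count=1):
    """Return (rules to loosen, rules to tighten or add) for the committee."""
    loosen = sorted(r for r, s in scorecard.items() if s["false_positive"] >= min_count)
    tighten = sorted(r for r, s in scorecard.items() if s["missed"] >= min_count)
    return loosen, tighten
```

Rules with false positives are candidates for loosening; categories with misses are candidates for a new or tighter rule, which mirrors the adjust-or-add loop described above.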

Professor Renee Knake Jefferson calls these playbooks “living documents.” They evolve with laws, business strategy, and even internal culture.

3. Training the Team, Not Just the AI

Many teams assume the AI does the work. It doesn’t. It needs oversight. Paralegals need 20-25 hours of training to understand how to validate outputs. Attorneys need 8-12 hours to interpret flagged items. Legal ops staff need 15-20 hours to manage the system.

Don’t skip this. One healthcare legal director told Capterra: “We thought we could just plug in the AI and go. It took four months and three iterations before we got reliable results. We didn’t train the team; we trained the tool.”

Checklist: Build Your Playbook in 6 Steps

Here’s how to get started. This isn’t theoretical. It’s based on real implementations from companies using Gavel, Dioptra, and Pramata.

- Inventory your contracts - Pull all executed agreements from the last 18 months. Filter by type: NDA, vendor, employment, software license.

- Identify your top 5 contract types - Focus on volume, not complexity. NDAs, standard vendor agreements, and SaaS contracts are low-hanging fruit.

- Extract your rules - For each contract type, list: What clauses are non-negotiable? What’s your fallback position? What gets escalated?

- Build the training dataset - Upload 50-100 clean examples of each contract type. Include redlines and negotiation history if available.

- Test and refine - Run the AI against 20 unseen contracts. Review every suggestion. Adjust rules. Repeat until fewer than 10% of the AI’s flags turn out to be false positives.

- Roll out and train - Start with legal ops and junior attorneys. Then expand. Document every change. Keep a version history.
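The stopping condition in the test-and-refine step can be written as an explicit gate. The checklist does not define how the 10% is measured, so this sketch assumes the false-positive rate is the share of AI flags the reviewer rejected; `Suggestion`, `false_positive_rate`, and `ready_to_roll_out` are illustrative names, not part of any vendor’s API.

```python
from dataclasses import dataclass

@dataclass
class Suggestion:
    contract_id: str
    accepted_by_reviewer: bool   # False means the reviewer judged the flag unnecessary

HOLDOUT_SIZE = 20     # unseen contracts per test round, per the checklist
MAX_FP_RATE = 0.10    # keep refining until the rate drops below this

def false_positive_rate(suggestions):
    # Assumption: share of AI flags that the reviewer rejected.
    if not suggestions:
        return 0.0
    rejected = sum(1 for s in suggestions if not s.accepted_by_reviewer)
    return rejected / len(suggestions)

def ready_to_roll_out(holdout_ids, suggestions):
    holdout = set(holdout_ids)
    if len(holdout) < HOLDOUT_SIZE:
        return False  # too few unseen contracts to judge
    in_holdout = [s for s in suggestions if s.contract_id in holdout]
    return false_positive_rate(in_holdout) < MAX_FP_RATE
```

A low false-positive rate says nothing about red flags the AI missed entirely, so a gate like this should be paired with a check for misses on the same 20 contracts.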

Companies that follow this process see 70-80% of routine contract reviews handled without human intervention within three months.

Training Your Team: Who Needs What

Not everyone needs the same training. Tailor it.

- Legal Operations Staff - They manage the system. Train them on data hygiene, version control, and monitoring AI performance. 15-20 hours.

- Attorneys - They validate outputs and handle exceptions. Focus on interpreting AI suggestions, recognizing hallucinations, and updating rules. 8-12 hours.

- Paralegals & Contract Specialists - They do the bulk of initial reviews. Teach them how to spot when the AI is unsure. 20-25 hours.

- Business Stakeholders - Sales, procurement, HR. Give them a 1-hour overview: “This tool helps us get contracts faster. It doesn’t replace legal judgment.”
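The role-based hours above add up quickly, so it can help to estimate a total before committing to a rollout. This sketch takes the per-role ranges straight from the list; the role keys and the headcounts in the example call are made up for illustration.

```python
# Per-role training hour ranges (low, high), taken from the list above.
TRAINING_HOURS = {
    "legal_ops": (15, 20),
    "attorney": (8, 12),
    "paralegal": (20, 25),
    "business": (1, 1),
}

def training_budget(headcount):
    """Return the (low, high) total training hours for a headcount by role."""
    low = sum(TRAINING_HOURS[role][0] * n for role, n in headcount.items())
    high = sum(TRAINING_HOURS[role][1] * n for role, n in headcount.items())
    return low, high

# Hypothetical team: 2 legal ops, 5 attorneys, 4 paralegals, 30 business stakeholders.
print(training_budget({"legal_ops": 2, "attorney": 5, "paralegal": 4, "business": 30}))
# (180, 230)
```

Paralegals dominate the total even in a small team, which matches their role doing the bulk of initial reviews.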

One company reduced onboarding time for new attorneys from six months to two days by using the AI playbook as their training manual. New hires didn’t guess what was acceptable-they saw the playbook in action.

What Not to Do

Here are the three biggest mistakes legal teams make:

- Using public templates - Training on generic NDA samples from the internet? You’ll get generic advice. Your playbook must reflect your company’s actual risk tolerance.

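- Ignoring data privacy - The IAPP found 73% of legal AI implementations failed to address data residency. If you’re training on EU contracts, make sure the vendor doesn’t store or process that data outside the EU/EEA unless the transfer is covered by an adequacy decision or another GDPR transfer mechanism, such as standard contractual clauses.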

- Thinking it’s set-and-forget - Laws change. Negotiation tactics shift. Your playbook must evolve. Treat it like your employee handbook-not a static PDF, but a living policy.
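If your vendor reports where contract data is stored and processed, a simple pre-deployment check can catch the residency gap described above. The config keys, region labels, and `transfer_safeguard` field below are hypothetical placeholders rather than any real platform’s settings, and passing the check does not replace a review of the vendor’s data processing terms.

```python
# Hypothetical region labels; substitute whatever your vendor actually reports.
EEA_REGIONS = {"eu-west", "eu-central", "eu-north"}
LOCATION_KEYS = ("storage_region", "processing_region", "backup_region")

def residency_problems(config, contract_origin):
    """List residency gaps for contracts of the given origin (a screening aid only)."""
    if contract_origin != "EU":
        return []
    problems = []
    for key in LOCATION_KEYS:
        region = config.get(key)
        if region is None:
            problems.append(f"{key} not specified")
        elif region not in EEA_REGIONS and not config.get("transfer_safeguard"):
            problems.append(f"{key}={region} is outside the EEA with no documented transfer safeguard")
    return problems
```

Treating an unspecified location as a problem, rather than assuming it is fine, is deliberate: backups and subprocessors are where residency gaps tend to hide.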

The Future Is AI-Assisted, Not AI-Replaced

Gartner predicts that by 2027, 85% of routine legal review tasks will be AI-assisted. That doesn’t mean lawyers are out of a job. It means they’re freed from repetitive work to focus on what matters: strategic advice, regulatory shifts, and high-stakes negotiations.

AI doesn’t remove the lawyer from the equation. It amplifies them.

The most successful legal departments aren’t those with the fanciest AI. They’re the ones that treated their playbook as a knowledge system, not a shortcut. They trained their people. They updated their rules. They built trust.

If you’re still wondering whether to build a playbook, ask yourself: Are you ready to stop spending 4.5 hours a day on contracts you’ve seen a hundred times before?

What’s the difference between a legal AI playbook and a general AI tool?

A general AI tool, like ChatGPT, gives generic answers based on public data. A legal AI playbook is built from your organization’s contracts, policies, and past negotiations. Instead of falling back on generic defaults, it applies your standards. For example, while ChatGPT might suggest a standard liability cap, your playbook knows your company only accepts caps at 1.5x contract value, and why.

Can generative AI replace in-house counsel?

No, and no reputable legal tech provider claims it can. AI handles routine, repetitive tasks like reviewing NDAs or flagging inconsistent clauses. But it can’t interpret ambiguous regulations, negotiate complex deals, or advise on emerging legal risks. Human judgment is still essential. The goal isn’t replacement; it’s augmentation.

How long does it take to build a legal AI playbook?

The initial build takes 80-120 hours of senior legal staff time, spread over 6-10 weeks. That includes cleaning data, defining rules, and testing outputs. Some teams start with one contract type (like NDAs) and expand later. Pre-built templates for common agreements can cut that time by 30-40%.
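Those ranges translate into a weekly commitment for senior staff. The sketch below does the arithmetic: the lightest load is the fewest hours over the longest schedule and the heaviest is the most hours over the shortest, while template savings are applied best-case to the low estimate and worst-case to the high one. The function name and parameters are illustrative.

```python
def build_estimate(hours=(80, 120), weeks=(6, 10), template_savings=(0.30, 0.40)):
    """Turn the FAQ's ranges into weekly load and template-adjusted totals."""
    low_h, high_h = hours
    short_wk, long_wk = weeks
    # Lightest week: fewest hours over the longest schedule; heaviest: most hours, shortest schedule.
    weekly = (low_h / long_wk, high_h / short_wk)
    # Best case takes the larger saving off the smaller total; worst case the reverse.
    with_templates = (low_h * (1 - template_savings[1]), high_h * (1 - template_savings[0]))
    return weekly, with_templates

weekly, with_templates = build_estimate()
print(weekly)           # (8.0, 20.0)
print(with_templates)   # (48.0, 84.0)
```

In other words, budget roughly 8 to 20 hours a week of senior time, or about 48 to 84 hours in total if you start from pre-built templates.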

What are the biggest risks of using AI in legal work?

The top three risks are: hallucinations (AI making up legal arguments), data privacy violations (storing sensitive contracts on non-compliant servers), and over-reliance (assuming the AI is always right). Mitigate them by validating outputs, using secure, compliant platforms, and keeping human oversight at every stage.

Do we need to update our playbook regularly?

Yes. Laws change. Court rulings shift interpretations. Your negotiation stance evolves. A playbook that worked in 2024 may be outdated by 2026. Set a quarterly review. Track which clauses get flagged most often; that’s where your rules need tuning.
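Tracking which clauses get flagged most often needs little more than a counter over the quarter’s flags. In this sketch `flagged_clauses` is a hypothetical list of clause labels exported from your review tool; the function returns the top labels with their counts and their share of all flags.

```python
from collections import Counter

def tuning_hotspots(flagged_clauses, top_n=3):
    """Return (clause, count, share of all flags) for the most frequently flagged clauses."""
    counts = Counter(flagged_clauses)
    total = sum(counts.values())
    # most_common returns an empty list for empty input, so total is never zero here.
    return [(clause, n, n / total) for clause, n in counts.most_common(top_n)]
```

Clauses that stay at the top of this list quarter after quarter are the most likely candidates for rule tuning.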

Next Steps

If you’re just starting: Pick one contract type. Start with NDAs. They’re simple, high-volume, and low-risk. Build your playbook around them. Test it. Refine it. Then expand.

If you’re already using AI: Audit your outputs. How many times did the AI miss a red flag? How often did it flag something that was fine? Use that data to improve your rules.

Legal teams that treat AI as a partner, not a replacement, don’t just save time. They reduce risk, improve consistency, and free up talent for work that actually moves the business forward.

Susannah Greenwood

I'm a technical writer and AI content strategist based in Asheville, where I translate complex machine learning research into clear, useful stories for product teams and curious readers. I also consult on responsible AI guidelines and produce a weekly newsletter on practical AI workflows.

8 Comments


About

EHGA is the Education Hub for Generative AI, offering clear guides, tutorials, and curated resources for learners and professionals. Explore ethical frameworks, governance insights, and best practices for responsible AI development and deployment. Stay updated with research summaries, tool reviews, and project-based learning paths. Build practical skills in prompt engineering, model evaluation, and MLOps for generative AI.

lol i just pasted a contract into chatgpt and it said the liability clause was fine but my boss yelled at me for 20 mins. ai ain’t smart yet.

How quaint. You treat legal work like a spreadsheet to be optimized. The law is not a set of algorithmic rules; it’s a living, breathing, contradictory mess of precedent, power, and human frailty. You can’t ‘train’ AI on contracts and expect it to understand why a CFO once accepted unlimited liability because his brother-in-law runs the vendor. That’s not governance; that’s delusion dressed in SaaS branding.

And please, spare me the ‘Fortune 500 success stories.’ Those are PR brochures written by consultants who’ve never seen a courtroom. Real legal risk doesn’t live in NDAs; it lives in the silent gaps between clauses, in the unspoken cultural norms of negotiation, in the power dynamics no dataset can capture.

You’re not building a playbook. You’re building a cage for your lawyers so they stop thinking. And when the AI misses a subtle jurisdictional trap in a Dubai cloud contract? You’ll be the one explaining to the board why your ‘efficiency’ cost you $200M.

Okay, so you're telling me I need to spend 120 hours cleaning up contracts... before I even get to the AI? That's like, the definition of a dumpster fire. I'm already drowning in emails. Why can't the AI just learn from the ones that got signed? Why do I need to be the contract janitor?

Also, 'escalation triggers'? What's next? A flowchart with a flowchart? I just want to sign stuff and go home. Why does this feel like a corporate cult?

And why is everyone acting like this is new? We've had contract templates since the 90s. This is just Excel with a fancy name.

AI playbooks? More like AI nightmares. You think you’re automating efficiency but you’re just outsourcing your ethical responsibility to a black box trained on your worst legal decisions.

Let’s be real: the moment you let AI redline a clause without human context, you’ve already lost. It doesn’t care if the vendor is a startup that’s about to collapse. It doesn’t know your company’s reputation hinges on this deal. It doesn’t smell the desperation.

And the ‘governance committee’? Please. That’s just a room full of people nodding while the real power sits in procurement and sales. They’ll override the AI anyway. So why build it? Just to feel like you’re doing something?

And the training hours? You’re asking paralegals to become AI whisperers while you sip your $12 oat milk latte. This isn’t innovation. It’s burnout with a PowerPoint.

This is actually really helpful. I’ve been struggling to explain to my team why we can’t just use generic templates. The part about using real signed contracts instead of internet samples made me realize we’ve been doing it wrong for years.

Starting with NDAs is smart. We’ve got over 300 a month. If we can cut that down to 15 minutes each, we’ll save 75 hours a week. That’s enough time to actually help with compliance audits instead of just stamping papers.

Also, the emphasis on training the team, not just the tool, is spot on. I’ve seen too many teams think AI will do the work. It won’t. It’ll just make mistakes faster.

Keep building. This is the future.

What is a legal playbook, really? Is it a set of rules? Or is it a mirror of organizational power? Who decides what’s ‘non-negotiable’? Who gets to define ‘risk tolerance’? Is it the lawyers? The CFO? The board?

When we train AI on our past contracts, we’re not encoding wisdom; we’re encoding bias. The clauses we accepted because we were tired. The redlines we ignored because the vendor was a friend. The exceptions we made for the CEO’s cousin.

AI doesn’t judge. But it doesn’t forgive either. It freezes our past mistakes into immutable logic. And then we call it ‘consistency’.

Maybe the real question isn’t how to build a playbook… but whether we should be building one at all. Or if we’re just afraid of the messy, human work that legal judgment actually requires.

4.5 hours a day on contracts? Bro, just hire a temp. Or pay someone $20/hour to read them. This whole AI playbook thing is just corporate theater. You’re not saving time; you’re adding layers of complexity.

‘Governance committee’? ‘Escalation triggers’? ‘Redlining logic’? Sounds like someone got fired and wrote a book to feel important.

I’ve been doing this for 15 years. We don’t need AI. We need better HR. Stop overcomplicating it.

I’ve seen teams do this right, and I’ve seen teams do it catastrophically wrong. The difference? Culture. If you treat this as a technical project, it fails. If you treat it as a cultural shift, where every person from paralegal to GC feels ownership over the rules, it transforms.

One of our teams started by inviting junior attorneys to co-write the rules. Not just review them. Write them. Suddenly, the AI started making suggestions that felt… human. Because they were built by humans.

And yes, it takes time. But that’s the point. This isn’t about speed. It’s about trust. Trust that the system reflects who we are, not who we wish we were.

Don’t just build a playbook. Build a practice. And then let the AI be the quiet assistant, not the boss.