Benchmarking Open-Source LLMs vs Managed Models for Real-World Tasks

When you need an AI model to write code, answer questions, or process sensitive data, you have two real choices: use an open-source model you run yourself, or plug into a managed API like OpenAI or Anthropic. It’s not about which one is "better." It’s about which one fits your team, your budget, and your data. In 2026, the performance gap has shrunk. But the trade-offs? They’re wider than ever.

Performance: Open-Source Models Are No Longer Behind

Two years ago, open-source models like Llama 2 were noticeably weaker than GPT-4. Today? Not so much. Meta’s Llama 3.1 405B is competitive with GPT-4 on general knowledge, math, and reasoning benchmarks. DeepSeek V3.2 hits 1460 Elo on LMArena, just 41 points below Gemini Pro. For most everyday tasks (summarizing documents, answering FAQs, drafting emails), there’s no visible difference.
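To put a 41-point gap in perspective: assuming LMArena scores follow the standard Elo convention (base 10, 400-point scale), you can compute how often the higher-rated model is expected to win a head-to-head vote. This is a minimal sketch; the function name is ours, and ties are ignored.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Expected probability that A is preferred over B under the standard
    Elo formula (base 10, 400-point scale). Ties are ignored."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


deepseek_v32 = 1460.0
gemini_pro = deepseek_v32 + 41  # 41 points higher, per the figures above

p = expected_win_rate(gemini_pro, deepseek_v32)
print(f"Gemini Pro preferred in about {p:.0%} of head-to-head votes")
```

A 41-point gap works out to the higher-rated model winning roughly 56% of votes, not far from a coin flip, which fits the observation that everyday users rarely notice a difference.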

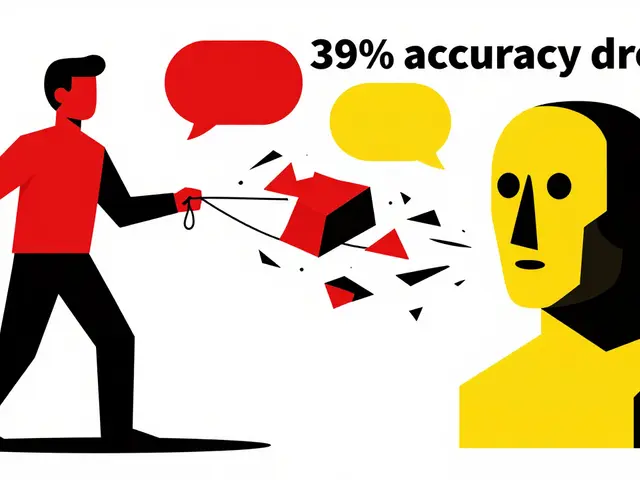

But look closer, and the gaps show up. On Codeforces, where models tackle competitive programming challenges, the top closed models reach a 2727 rating. The top open models? 2029. That’s a 698-point gap. On SWE-bench Verified, a benchmark of real-world bug fixes, closed models resolve 71.7% of issues. Open models manage 49.2%. That’s not a small difference. That’s the difference between a tool that helps and one that leaves you debugging its mistakes.
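Another way to read the SWE-bench numbers is to count what each model leaves behind for a human to fix. This sketch just does the arithmetic on the two resolve rates quoted above.

```python
# Resolve rates on SWE-bench Verified, as quoted in the text (percent).
closed_resolved = 71.7
open_resolved = 49.2

# Issues each model leaves unresolved, i.e. work that falls back on a developer.
closed_unresolved = 100 - closed_resolved
open_unresolved = 100 - open_resolved

ratio = open_unresolved / closed_unresolved
print(f"Unresolved: closed {closed_unresolved:.1f}%, open {open_unresolved:.1f}%")
print(f"Open models leave {ratio:.1f}x as many issues unresolved")
```

Open models leave about 51% of issues unresolved versus about 28% for closed models, roughly 1.8 times as much cleanup work.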

Latency matters too. OpenAI’s o3 completes complex reasoning tasks in 27 seconds. DeepSeek R1 on the same tasks? About 1 minute 45 seconds, nearly four times as long. Why? Because companies like OpenAI spend millions optimizing their inference pipelines: custom hardware, model compression, caching layers. Most teams don’t have that kind of engineering muscle.

Cost: Open-Source Wins on Volume, But Not on Setup

On paper, the per-token math is lopsided. Llama-3-70B costs roughly $0.60 per million input tokens and $0.70 per million output tokens. Compare that to GPT-4 Turbo’s list prices of $10 and $30. That’s a 94 to 98% drop in cost.
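Working the quoted prices through shows that the savings differ by token type. A minimal sketch using only the per-million-token figures in the paragraph above:

```python
def savings(cheap_usd_per_m: float, expensive_usd_per_m: float) -> float:
    """Fractional cost reduction when moving from the expensive price to the cheap one."""
    return 1 - cheap_usd_per_m / expensive_usd_per_m


# Prices per million tokens, as quoted in the text.
input_saving = savings(0.60, 10.00)
output_saving = savings(0.70, 30.00)
print(f"input: {input_saving:.1%} cheaper, output: {output_saving:.1%} cheaper")
```

That comes to about 94% on input and about 98% on output; the blended figure for a real workload depends on its mix of input and output tokens.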

But here’s the catch: if you run the model yourself to get that kind of unit cost, the per-token price leaves out the $100,000+ server rack with 8 NVIDIA A100s. Or the two ML engineers you need to hire to keep it running. Or the power bill, cooling, monitoring tools, and security patches. Managed models? You pay $10 per million input tokens, with no hardware, no staff, no maintenance.

At 10 million tokens a month, that API bill comes to roughly $100 to $300, so for startups or teams with no infrastructure team, the API is cheaper. For enterprises running millions of queries daily, self-hosting can save millions. It’s not about per-token price. It’s about total cost of ownership.
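Total cost of ownership can be sketched as a break-even calculation: self-hosting is mostly a fixed monthly cost, while the API bill scales with tokens. The $100,000 server, the two engineers, and the $10 per million tokens come from the text above; the 36-month amortization, the $200,000 loaded cost per engineer, and the $2,000 monthly operations figure are hypothetical placeholders. The sketch also assumes one server can carry the whole load, so self-hosting cost does not grow with volume.

```python
from dataclasses import dataclass


@dataclass
class SelfHostCosts:
    hardware_usd: float = 100_000                # from the text: 8x A100 server
    amortization_months: int = 36                # hypothetical: 3-year depreciation
    engineers: int = 2                           # from the text: two ML engineers
    cost_per_engineer_per_year: float = 200_000  # hypothetical loaded salary
    ops_usd_per_month: float = 2_000             # hypothetical power, cooling, monitoring

    def monthly(self) -> float:
        """Fixed monthly cost of running the model yourself."""
        hardware = self.hardware_usd / self.amortization_months
        staff = self.engineers * self.cost_per_engineer_per_year / 12
        return hardware + staff + self.ops_usd_per_month


def api_monthly_usd(tokens_per_month: float, usd_per_million: float) -> float:
    """Managed-API bill, which scales linearly with token volume."""
    return tokens_per_month / 1_000_000 * usd_per_million


def break_even_tokens(costs: SelfHostCosts, api_usd_per_million: float) -> float:
    """Monthly token volume at which the API bill equals the self-hosting cost."""
    return costs.monthly() / api_usd_per_million * 1_000_000


costs = SelfHostCosts()
print(f"self-hosting: ${costs.monthly():,.0f}/month")
print(f"API at 10M tokens/month: ${api_monthly_usd(10_000_000, 10.0):,.0f}")
print(f"break-even: {break_even_tokens(costs, 10.0) / 1e9:.1f}B tokens/month")
```

With these placeholder numbers, self-hosting costs about $38,000 a month, the API bill at 10 million tokens is about $100, and the two only cross at roughly 3.8 billion tokens a month. Pricing a share of tokens at the $30 output rate would pull the break-even point lower. Swap in your own figures; the structure of the calculation is the point.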

Control: Who Owns Your Data?

If you work in healthcare, finance, or government, this isn’t optional. Open-source models let you run everything on your own servers. No data leaves your network. You control the model, the logs, the audit trails. That’s why a growing number of banks and hospitals are turning to Llama 3.1 and Mistral models.

Managed models? Your prompts, your documents, your customer data all get sent to a third-party server. Even if they claim it’s not stored, you’re still trusting them. For regulated industries, that can be a legal risk. Open-source keeps compliance in your own hands: you still have to build the controls, but no third party sits in the data path.

And customization? Open models let you fine-tune them on your internal docs, your jargon, your workflows. You can tweak the architecture, add custom layers, even rebuild parts of the model. Managed models? You get prompt engineering, RAG, and whatever hosted fine-tuning the vendor chooses to offer. The weights stay off-limits. You’re stuck with what the vendor gives you.
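RAG customizes a managed model through the prompt rather than the weights: retrieve relevant internal documents, then paste them into the request. A minimal sketch, assuming a toy keyword-overlap retriever in place of the embedding search a production system would typically use; `retrieve`, `build_prompt`, and the sample documents are hypothetical.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k docs sharing the most words with the query (toy retriever)."""
    q = Counter(tokenize(query))

    def score(doc: str) -> int:
        d = Counter(tokenize(doc))
        return sum(min(q[t], d[t]) for t in q)

    return sorted(docs, key=score, reverse=True)[:k]


def build_prompt(query: str, docs: list[str], k: int = 2) -> str:
    """Assemble retrieved context plus the question into one prompt string."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


internal_docs = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "Expense reports are due on the 3rd of each month.",
]
print(build_prompt("When are expense reports due?", internal_docs, k=1))
```

The resulting string is what you would send to the managed API; the model itself never changes, which is exactly the limitation the paragraph describes.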

Operations: Plug-and-Play vs. Full-Time Job

Want to get started today? Use an API. Sign up. Get an API key. Make a POST request. Done. In 10 minutes, you’re live.
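For a sense of how small that POST request is, here is a sketch against OpenAI’s Chat Completions endpoint using only Python’s standard library. It assumes the endpoint URL, request fields (`model`, `messages`), and response shape (`choices[0].message.content`) as publicly documented at the time of writing, plus an `OPENAI_API_KEY` environment variable; check the current API reference before relying on it.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_request(prompt: str, api_key: str, model: str = "gpt-4o") -> urllib.request.Request:
    """Build (but do not send) a Chat Completions POST request."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the first choice's message text."""
    req = build_request(prompt, os.environ["OPENAI_API_KEY"])
    with urllib.request.urlopen(req, timeout=60) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

Calling `ask("Summarize our refund policy in one sentence.")` sends the request and returns the reply. Building the request separately from sending it keeps the request logic testable without network access.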

Deploying Llama 3.1? You need GPU clusters, quantization, load balancing, auto-scaling, monitoring, and someone who knows how to update a 405B-parameter model without crashing your whole system. Mistral models are easier, but still require serious infrastructure knowledge.
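Quantization is on that list largely for memory reasons. A back-of-the-envelope sketch of weight memory for a 405B-parameter model, assuming 1 GB = 10^9 bytes and the 80 GB A100 variant, and ignoring KV cache, activations, and framework overhead (all of which need additional room):

```python
def weight_memory_gb(params: float, bits_per_param: int) -> float:
    """Memory for model weights alone, in GB (1 GB = 10**9 bytes)."""
    return params * bits_per_param / 8 / 1e9


PARAMS_405B = 405e9
CLUSTER_GB = 8 * 80  # eight A100s, assuming the 80 GB variant

for label, bits in [("FP16/BF16", 16), ("FP8/INT8", 8), ("4-bit", 4)]:
    need = weight_memory_gb(PARAMS_405B, bits)
    verdict = "weights fit" if need < CLUSTER_GB else "weights do not fit"
    print(f"{label:>9}: {need:6.1f} GB, {verdict} in {CLUSTER_GB} GB")
```

At 16-bit precision the weights alone (810 GB) exceed the 640 GB across eight 80 GB A100s; 8-bit (405 GB) or 4-bit (202.5 GB) weights fit, leaving headroom for the KV cache.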

Managed models handle updates, security patches, scaling during traffic spikes, and global latency optimization, all automatically. Open-source? You do it all. One missed update, one misconfigured router, one overheated GPU, and your service goes down. For teams without MLOps expertise, the API is the only sane choice.
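Scaling for traffic spikes is something you size yourself when self-hosting. One common first estimate uses Little’s law (average requests in flight = arrival rate × average latency). A minimal sketch; the traffic, latency, and per-replica concurrency figures are hypothetical, and real capacity depends on your serving stack, batch sizes, and prompt lengths.

```python
import math


def replicas_needed(requests_per_sec: float, avg_latency_sec: float,
                    max_concurrent_per_replica: int) -> int:
    """Little's law: requests in flight = arrival rate x time in system.
    Divide by per-replica concurrency and round up to whole replicas."""
    in_flight = requests_per_sec * avg_latency_sec
    return math.ceil(in_flight / max_concurrent_per_replica)


# Hypothetical workload: 5 s average generation time and 16 concurrent
# requests per model replica.
normal = replicas_needed(20, 5.0, 16)  # steady traffic
spike = replicas_needed(60, 5.0, 16)   # 3x traffic spike
print(f"steady: {normal} replicas, spike: {spike} replicas")
```

Little’s law gives an average, so in practice you would add headroom on top of these counts.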

Vendor Lock-In: Freedom vs. Convenience

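Open-source models are free to use, modify, and distribute under their licenses. No license fees. No usage caps. No surprise price hikes. You’re not tied to anyone. The licenses do differ, though: some Mistral models ship under Apache 2.0, while Meta’s Llama license requires companies with more than 700 million monthly active users to request a separate license.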

Managed models? You’re locked in. If OpenAI changes pricing, you pay. If they deprecate a model, you rebuild. If their API goes down, your app breaks. You have little control.
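One way to soften lock-in, and the outage risk that comes with it, is to call models through a thin provider-agnostic layer that falls back to the next backend when one fails. A minimal sketch; `complete_with_fallback`, `AllProvidersFailed`, and the two stand-in providers are hypothetical, and a real version would wrap actual API clients and catch their specific error types.

```python
from typing import Callable, Sequence

Provider = Callable[[str], str]  # takes a prompt, returns completion text


class AllProvidersFailed(Exception):
    """Raised when every configured backend errors out."""


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try each (name, provider) in order; return (name, text) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # narrow this to your clients' error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real clients.
def managed_api(prompt: str) -> str:
    raise ConnectionError("simulated outage")


def self_hosted(prompt: str) -> str:
    return f"[local model] reply to: {prompt}"


name, answer = complete_with_fallback(
    "Summarize this ticket.", [("managed", managed_api), ("self_hosted", self_hosted)]
)
print(name, answer)
```

Because callers only see the `Provider` signature, switching vendors or adding a self-hosted backend becomes a configuration change rather than a rewrite.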

But here’s the flip side: managed models evolve fast. GPT-4o, Claude 3.5 Sonnet, Gemini Pro: they get better every few months. Open-source models improve too, but slower. Community-driven development means you wait for someone else to optimize. Managed models get updates pushed server-side. You don’t lift a finger.

Which One Should You Use?

Choose open-source if:

- You handle sensitive data (HIPAA, GDPR, financial records)

- You process enough tokens each month that per-token savings outweigh hardware and staffing costs

- You have ML engineers or can hire them

- You need to fine-tune the model for your domain

- You want to avoid vendor dependency

Choose managed if:

- You need peak performance on coding or complex reasoning

- You have no infrastructure team

- You want to deploy in hours, not weeks

- You’re okay with third-party data handling

- You’re building a prototype or MVP

There’s no single right answer. A company might use GPT-4o for customer support chatbots (because speed and reliability matter) and Llama 3.1 for internal document processing (because data privacy and cost do). It’s not either/or. It’s both/and.
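The split described above can be encoded as a small routing rule. A minimal sketch; the `Task` fields, the `Backend` names, and the rule order (regulated data first, then customer-facing traffic) are assumptions for illustration, not a prescribed policy.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    SELF_HOSTED = "self_hosted"  # e.g. Llama 3.1 on your own servers
    MANAGED = "managed"          # e.g. GPT-4o via API


@dataclass
class Task:
    contains_regulated_data: bool  # e.g. patient records, financial data
    customer_facing: bool


def route(task: Task) -> Backend:
    """Pick a backend: privacy first, then speed for customer-facing work."""
    if task.contains_regulated_data:
        return Backend.SELF_HOSTED  # data never leaves your network
    if task.customer_facing:
        return Backend.MANAGED      # speed and reliability matter most
    return Backend.SELF_HOSTED      # internal bulk work: cost matters most


support_chat = Task(contains_regulated_data=False, customer_facing=True)
print(route(support_chat).value)
```

Checking regulated data first means a customer-facing request that carries, say, patient records still stays on your own servers.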

The Future Is Bimodal

In 2024, open-source LLMs were the underdogs. In 2026, they’re a legitimate alternative. On everyday tasks, the frontier models are nearly equal; on competitive coding and complex reasoning, closed models still lead. The bigger difference now is in how you deploy them.

Organizations with deep tech resources are going all-in on open-source. They’re building private AI clouds. Others are doubling down on APIs, betting on speed, safety, and simplicity.

The real winner? You. Because now you have real power. Not just to choose a model, but to choose how you run it. And that changes everything.

Susannah Greenwood

I'm a technical writer and AI content strategist based in Asheville, where I translate complex machine learning research into clear, useful stories for product teams and curious readers. I also consult on responsible AI guidelines and produce a weekly newsletter on practical AI workflows.


7 Comments


About

EHGA is the Education Hub for Generative AI, offering clear guides, tutorials, and curated resources for learners and professionals. Explore ethical frameworks, governance insights, and best practices for responsible AI development and deployment. Stay updated with research summaries, tool reviews, and project-based learning paths. Build practical skills in prompt engineering, model evaluation, and MLOps for generative AI.

Honestly? I've been using Llama 3.1 for internal docs and it's been a game changer. No more worrying about client data leaking into some corporate black box. Sure, the server costs are a beast, but when you're processing 20M tokens a month? It pays for itself. And the peace of mind? Priceless.

Also, no one talks about how much easier it is to debug when you can actually see the weights. API models feel like a black box with a fancy label.

LMAO you guys think open-source is ‘cheaper’? Bro, you’re forgetting the 3 engineers who quit because they couldn’t sleep through the sound of 8 A100s screaming like a banshee in a blender. Managed APIs? They’re the equivalent of buying a Tesla instead of building a car out of scrap metal and hope. You’re not saving money; you’re outsourcing your sanity.

There’s a deeper truth here, buried beneath the benchmarks and cost-per-token spreadsheets. We’re not just choosing models; we’re choosing our relationship with technology. Do we want to own our tools, or be comforted by the illusion of convenience? The API is a seductress, whispering ‘just click here’… but open-source? It asks you to grow up. To build. To fail. To learn. And in that struggle, we become more than users. We become creators.

This is an excellent breakdown. I’d only add that for teams transitioning from legacy systems, the hybrid approach (using APIs for public-facing features and open-source for internal workflows) is often the most sustainable path. It balances innovation with risk mitigation. Also, don’t underestimate the value of auditability in regulated environments. It’s not just compliance; it’s trust.

lol you all are so serious. gpt-4o is 71.7% on swe-bench? that's still like 3 out of 10 bugs unfixed. and you think open models are bad? i've seen gpt-4o write code that breaks production just because it 'thought' a variable was optional. open models are just more honest about their failures. also, who even has 8 a100s? this is all rich people fantasy. real devops? we run mistral on a raspberry pi and pray.

Wait, so if I use an open model, I have to babysit a server farm? Ugh. Can't I just… not? I mean, I get the privacy thing, but I just want to write a Slack bot that tells me when my TPS reports are late. Why does this have to be so complicated? Can't we all just… chill?

I think the real takeaway is that neither option is universally better. The best teams I’ve seen use both, strategically. API for customer-facing speed, open-source for internal heavy lifting. It’s not a war; it’s a toolkit. And honestly? The fact that we even have this choice now is kind of amazing.