Democratization of Software Development Through Vibe Coding: Who Can Build Now

Imagine describing a complex dashboard to an artificial intelligence, watching it generate the actual lines of code, and then deploying a fully functional web app, all without typing a single character of traditional programming syntax. This isn't science fiction; it's vibe coding, a paradigm that is shifting how we build software today. In the landscape of 2026, this method has fundamentally altered who gets to build digital products. It sits at the crossroads of natural language processing and practical engineering, turning conversation into executable logic.

The shift represents more than just a new tool; it's a redefinition of the barrier to entry for technology creation. For decades, building software required years of learning syntax, debugging environments, and understanding architectural patterns. Now, large language models interpret plain English requests and produce code that works. This capability has triggered what industry analysts call the democratization of software development. It allows people who have always had ideas but lacked technical training to finally become creators.

What Exactly Is Vibe Coding?

Vibe coding is a hybrid development approach combining intuitive natural language input and AI-driven code suggestions to accelerate application creation. Unlike standard no-code platforms that lock you into specific drag-and-drop templates, vibe coding lets you describe functionality, and the system generates actual source code (Python, JavaScript, or TypeScript) that you can inspect and modify if needed. It bridges the gap between the visual simplicity of builders and the flexibility of traditional development.

This methodology relies heavily on sophisticated Large Language Models (LLMs), such as GPT-4o, Google Gemini 2.5 Pro, and Meta Llama. These systems have been trained on large amounts of source code, which helps them map a plain-language request to a working implementation. When you tell an AI agent "create a login form that saves data to Firebase," it doesn't just suggest snippets; it constructs the file structure, writes the authentication logic, and sets up the backend configuration automatically. Companies like Replit and Google Cloud have since documented this workflow, describing it as a style where users express their intent in natural language and the AI transforms that intent into executable code.

Practically speaking, vibe coding operates on two loops. There is the high-level lifecycle for building complete applications (defining the scope, database schema, and UI components) and the low-level iterative loop where you refine specific features through follow-up prompts. It feels less like commanding a robot and more like having a junior developer paired with you in real time. You focus on the "what" and the "why," while the AI handles the "how." However, this convenience brings significant responsibilities regarding oversight, as the AI acts as a generator, not a certifier of quality.

Who Is Actually Building With Vibe Coding?

The primary beneficiaries of this shift aren't just professional programmers looking to work faster; the demographic has expanded significantly. We see three distinct groups utilizing these tools in the current market.

- Citizen Developers: These are product managers, business analysts, and entrepreneurs who lack formal coding training. They represent about 29% of AI-assisted adoption as of late 2024. For them, vibe coding removes the need to hire expensive dev teams for internal tools or MVPs.

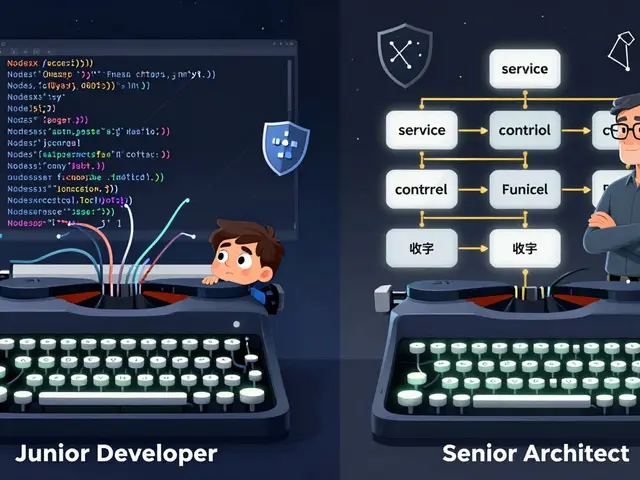

- Professional Developers: Senior engineers use vibe coding to bypass boilerplate. Instead of writing repetitive CSS or database migrations, they describe the desired state. Data shows these developers report completing tasks 55% faster, though they spend significant time reviewing the output for security best practices.

- Educators and Students: Coding curriculums are adapting. Educators are using AI agents to help students focus on logic rather than syntax errors. A study found non-technical users could create basic CRUD apps in 12 hours using guided AI practice, compared to 120+ hours traditionally.

Real-world stories highlight this shift. Non-technical founder Sarah Chen reported saving $45,000 in developer costs by building three internal startup tools using Replit's agent capabilities. She spent days refining prompts instead of weeks waiting for sprints. On Reddit communities dedicated to this topic, users regularly post screenshots of dashboards they built in under an hour by simply iterating on prompts. This accessibility explains why venture capitalists are increasingly greenlighting projects led by domain experts rather than solely technical founders.

Tools and Platforms Powering the Shift

The ecosystem has evolved from simple autocomplete to agentic systems capable of full-stack execution. Here is how the major players compare in the current marketplace.

| Platform | Primary Strength | Integration | Ideal User |
|---|---|---|---|
| GitHub Copilot Workspace | Deep repository context and refactoring | VS Code, JetBrains | Enterprise Developers |
| Replit Agent | End-to-end app deployment and hosting | Built-in cloud environment | Non-technical Founders |
| Google Gemini Code Assist | Cloud-native integrations (Firebase, GCP) | IDE Plugins | Cloud Architects |
| Wasp Framework | Strict architecture and React integration | Full-Stack Templates | Web Application Builders |

GitHub Copilot remains the heavy hitter for professionals, charging around $10 per user monthly. Its strength lies in knowing your existing codebase context, allowing you to vibe-code new features that adhere to old patterns. However, for someone starting from zero, Replit offers a more "black box" experience. It handles the server provisioning, database connection, and SSL certificates behind the scenes. You simply type your idea, and the environment launches instantly. This one-click deployment removes the infrastructure headaches that usually scare off beginners.

On the enterprise side, Microsoft has integrated similar capabilities into Visual Studio, reporting that 42% of their new prototypes are initiated via AI assistants. These tools are moving beyond chat interfaces to direct IDE editing, meaning changes appear directly in your file editor with diffs you can review. This integration ensures that vibe coding doesn't isolate you from your code but rather accelerates your interaction with it.

Navigating the Risks and Limitations

While the speed gains are impressive, relying entirely on AI generation carries distinct risks. Security is the most critical concern. Reports indicate that approximately 37% of AI-generated code requires modification before it meets production security standards. An AI might create a password field that doesn't hash data or set up an API endpoint with open CORS policies because those weren't explicitly forbidden in your prompt. Human oversight is still mandatory to prevent vulnerabilities.
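The two failure modes mentioned above, unhashed passwords and wide-open CORS, are the kind of thing a reviewer can check for directly. The following is a minimal sketch using only the Python standard library; the function names, the iteration count, and the example origin are assumptions made for illustration, not output from any particular tool.

```python
import hashlib
import hmac
import secrets

# Assumed values for illustration; tune them to your own security policy.
ITERATIONS = 200_000
SALT_BYTES = 16
ALLOWED_ORIGINS = {"https://app.example.com"}  # hypothetical front-end origin


def hash_password(password: str) -> str:
    """Return 'salt_hex$hash_hex' so the plain password is never stored."""
    salt = secrets.token_bytes(SALT_BYTES)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return f"{salt.hex()}${digest.hex()}"


def verify_password(password: str, stored: str) -> bool:
    """Recompute the hash with the stored salt and compare in constant time."""
    salt_hex, digest_hex = stored.split("$")
    candidate = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), bytes.fromhex(salt_hex), ITERATIONS
    )
    return hmac.compare_digest(candidate, bytes.fromhex(digest_hex))


def is_origin_allowed(origin: str) -> bool:
    """Allow only explicitly listed origins instead of a '*' wildcard."""
    return origin in ALLOWED_ORIGINS
```

If the generated code stores the raw password or answers every origin, that is a signal to add constraints like these to the prompt and to the review checklist.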

Maintainability is another factor. Users often complain that while the initial app works, the underlying structure can be messy (often called "spaghetti code") if not reviewed. Without architectural knowledge, a vibe coder might end up with a project that breaks when trying to add scale later. This creates a "dependency trap" where you can start the project but struggle to finish it or expand it. To mitigate this, many professionals now treat generated code as a draft. They run automated linters, perform unit testing, and refactor critical logic manually before committing to a repository.
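Treating generated code as a draft can be as simple as pinning its behaviour down with a few unit tests before committing. The sketch below assumes a hypothetical AI-generated helper called `cart_total`; the function and the test cases are invented for illustration.

```python
import unittest


def cart_total(prices, discount_percent=0):
    """Hypothetical AI-generated helper: sum prices and apply a percentage discount."""
    if not 0 <= discount_percent <= 100:
        raise ValueError("discount_percent must be between 0 and 100")
    subtotal = sum(prices)
    return round(subtotal * (1 - discount_percent / 100), 2)


class CartTotalTests(unittest.TestCase):
    def test_empty_cart_is_zero(self):
        self.assertEqual(cart_total([]), 0)

    def test_discount_is_applied(self):
        self.assertEqual(cart_total([10.0, 20.0], discount_percent=10), 27.0)

    def test_invalid_discount_is_rejected(self):
        # Edge cases like this are easy for a generated draft to miss.
        with self.assertRaises(ValueError):
            cart_total([10.0], discount_percent=150)
```

Saved in a test file, these cases can be run with `python -m unittest`. A failing case gives you a concrete follow-up prompt rather than a vague sense that something is off.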

Intellectual Property also looms over the sector. Questions remain about who owns the code generated by an AI model trained on public datasets. While copyright law generally protects original human contributions, purely AI-generated assets sit in a gray area. Major litigation cases, such as the GitHub Copilot class action lawsuit, continue to shape the legal framework. Until regulations clarify, enterprises should maintain rigorous documentation showing where human judgment was applied to the final codebase.

The Workflow for Effective Vibe Coding

To succeed in this new environment, you need a structured approach rather than random prompting. Successful practitioners follow a refined workflow that maximizes the AI's potential while managing risk.

- Define Scope Clearly: Start with a feature list, not vague goals. Instead of "make a store," say "build a React shop with Stripe checkout and a MongoDB cart."

- Prompt Iteratively: Don't expect perfection in one go. Use follow-up prompts like "add error handling for failed payments" to refine the output.

- Review Before Deploy: Inspect the generated file structure. Check for hardcoded secrets or missing validation logic.

- Test Locally: Even with cloud previews, running a local instance helps identify environment-specific bugs.

- Document Architecture: Keep a README explaining how the AI constructed the solution for future maintenance.
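The "Review Before Deploy" step can be partly automated. Below is a rough sketch of a hardcoded-secret check over generated files; the two regular expressions and the file suffixes are illustrative assumptions and are far less thorough than a dedicated secret scanner.

```python
import re
from pathlib import Path

# Illustrative patterns only; real scanners ship much larger rule sets.
SECRET_PATTERNS = {
    "assigned secret": re.compile(
        r"""(?i)(api[_-]?key|secret|password|token)\w*\s*[:=]\s*["'][^"']{8,}["']"""
    ),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every suspicious line in text."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


def scan_project(root: str, suffixes=(".py", ".js", ".ts")) -> dict[str, list[tuple[int, str]]]:
    """Scan source files under root and report findings per file path."""
    report = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            findings = scan_text(path.read_text(encoding="utf-8", errors="ignore"))
            if findings:
                report[str(path)] = findings
    return report
```

Calling `scan_project(".")` from the project root returns a dictionary of flagged files. An empty result does not prove the code is clean; it only means these two patterns found nothing.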

Developing "prompt engineering" skills is effectively the new literacy for coding. Google's Developer Advocates noted that successful coders spend 30% of their time crafting precise descriptions. This shifts the skill set from memorizing library syntax to articulating system requirements clearly. It is a move from syntactic precision to semantic clarity.

Looking Ahead: The Future of Development

By 2027, predictions suggest that 65% of application development will incorporate AI assistance in some form. We are likely moving toward "Agentic Systems," where the AI doesn't just answer questions but plans, executes, and debugs entire applications with minimal intervention. Early research from Google demonstrates systems capable of autonomous planning. If these reach maturity, the role of the human developer will shift even further from writer to director and editor.

This evolution blurs the line between "technical" and "non-technical" roles. Domain experts will increasingly own the delivery of their solutions. A marketing manager could build their own analytics dashboard; a teacher could build a classroom management tool. The constraint is no longer ability to code, but the ability to define the problem clearly and verify the solution's quality. The industry is heading toward a future where software is as abundant as hardware is scarce, driven by the ability to translate intent directly into reality.

Frequently Asked Questions

Does vibe coding require any programming knowledge?

You can start building immediately without knowing syntax. However, understanding basic concepts such as variables, loops, and APIs helps you verify the AI's work and debug issues effectively.

Can I publish apps made with vibe coding to the App Store?

Yes, many platforms allow you to export mobile-ready code. However, you must ensure the app meets store guidelines regarding privacy and performance, which AI sometimes overlooks.

Is AI-generated code secure enough for enterprise use?

Not without review. Reports indicate that roughly 37% of AI-generated code needs security modifications before it meets production standards. Enterprise teams use it for drafts but mandate strict auditing before deployment.

How does vibe coding differ from no-code tools?

No-code uses pre-built visual blocks limited to specific templates. Vibe coding produces actual text code that you can edit, offering much higher customization and control.

Will vibe coding replace software engineers?

It won't replace them, but it will change their role. Engineers will spend more time architecting systems and verifying AI output rather than writing boilerplate code manually.

Susannah Greenwood

I'm a technical writer and AI content strategist based in Asheville, where I translate complex machine learning research into clear, useful stories for product teams and curious readers. I also consult on responsible AI guidelines and produce a weekly newsletter on practical AI workflows.

5 Comments

The distinction between defining requirements and executing logic is blurring with these new interfaces. We often forget that programming was always about communication rather than rote memorisation. The terminology used here seems precise enough for now but definitions will surely shift. I suspect the industry will need standardised protocols for prompt engineering soon. Otherwise we risk creating technical debt that no legacy developer understands.

This trend represents a fundamental degradation of the craft we once held sacred. For decades, the struggle against the compiler forged the true engineer into something superior to mere idea men. Now any amateur with a vague notion believes they possess the right to touch production infrastructure. They speak of vibes and feelings instead of rigorous architecture and memory management. It creates a deluge of spaghetti code that will haunt us until the systems inevitably collapse. True mastery requires blood and sweat in the terminal, not conversational whimsy. If you cannot debug a segmentation fault, do not claim to own the machine. We are lowering the bar to sand level and expecting skyscrapers to emerge. The romantic notion of creation is being commodified into cheap content generation. Real developers know that understanding the binary flow is what separates architects from janitors. This tool might help a manager build a form, but it cannot replace the intuition of ten years' experience. There is nothing honourable about outsourcing your intellect to a black box model. The fragility of these applications will become apparent when the cloud provider decides to shut down the service. Users will blame the developer when the API keys rotate without warning. We must resist the allure of lazy convenience and demand excellence from our peers.

One must ponder the ethical implications of shifting labour to synthetic agents. It is indeed progress only if the human element remains part of the equation. We are witnessing a profound transformation of agency itself. The moral weight of a system failure lies in the prompter's hands now. It is a dangerous game to delegate consequence to algorithms that feel nothing. One must consider the soul of the work. The machine does not dream when it compiles. Therefore the burden remains entirely upon the operator who initiates the sequence. This responsibility cannot be brushed aside as merely technical overhead.

I feel like everyone is missing the bigger picture here and it actually feels amazing. The idea that anyone could create something meaningful with just their thoughts is incredibly inspiring to me. I imagine my grandmother finally building her garden tracking app without needing to hire someone expensive. It opens up possibilities for creativity that were locked behind paywalls for way too long. We should focus on the potential rather than the elitist arguments against it. Technology evolves and adapts to include more voices in the conversation every day. I am genuinely excited to see what kind of wild projects people launch next week. It really does change the landscape of who gets to tell their story digitally. There is so much energy coming from the community already trying these tools out daily. I want to see the future of innovation unfold without barriers holding us back completely.

You should really try this before deciding it's impossible.