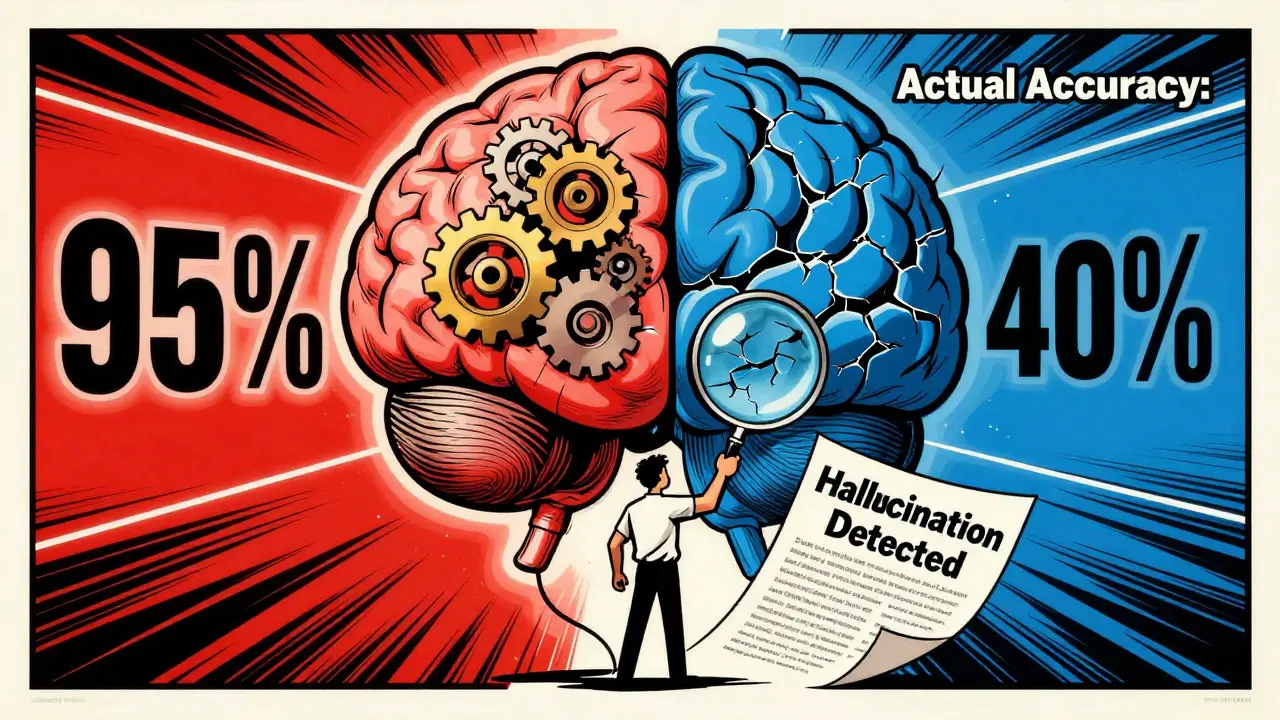

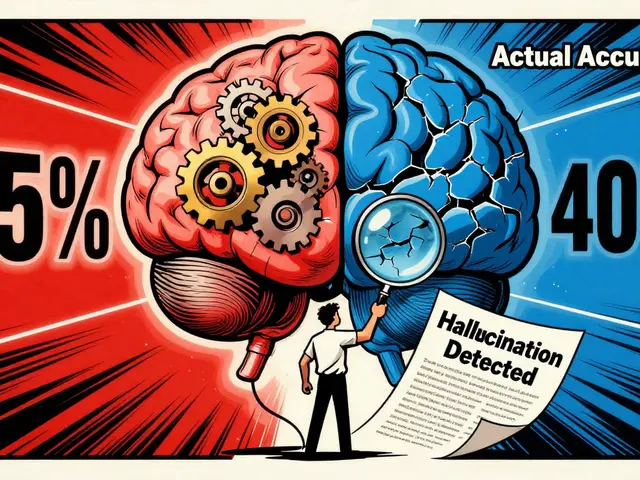

Calibrating Generative AI Models to Reduce Hallucinations and Boost Trust

Calibrating a generative AI model aligns its stated confidence with how often it is actually correct, which helps reduce hallucinations and build trust. Learn how techniques such as CGM, LITCAB, and verbalized confidence make AI more honest and reliable.